Detecting swimming pools with GPT-4V

The rise of off-the-shelf and foundation models has enabled AI teams to fine-tune existing models and pre-label data faster and more accurately — in short, significantly accelerating AI development. However, using these models at scale for building AI can quickly become expensive. One way to mitigate these costs and reduce waste is to add a model comparison process to your workflow, ensuring that any model you choose to integrate for AI development is the best choice for your requirements.

A comprehensive model comparison process evaluates models on various metrics, such as performance, robustness, and business fit. The results enable teams to kickstart model development, decrease time to value, and get the best results for their specific use case with less time and lower cost.

Embedding model comparison into the AI development workflow, however, comes with its own unique challenges, including:

- Confidently assessing the potential and limitations of pre-trained models

- Visualizing models’ performance for comparison

- Effectively sharing experiment results

In this blog post, we’ll explore how you can tackle these challenges for a computer vision use case with the Foundry add-on for Labelbox Model.

Why is selecting the right model critical?

Selecting the most suitable off-the-shelf model is pivotal for ensuring accurate and reliable predictions tailored to your specific business use case, often leading to accelerated AI development. Because different models exhibit different performance characteristics, comparing the models’ predictions on your own data helps you see which model excels in metrics such as accuracy, precision, and recall. This systematic approach to model evaluation and comparison also gives you a “store of record” of results that you can refer back to as you continue to improve model performance.

Choosing the best off-the-shelf model provides a quick and efficient pathway to production, ensuring that the model aligns well with the business objectives. This alignment is crucial for the model's immediate performance and sets the stage for future improvements and adaptability to evolving requirements. The most suitable model for your use case also reduces the time and money spent on a labeling project. For instance, when pre-labels generated by a high-performing model are sent for annotation, less editing is required, making the labeling project quicker and more cost-effective. For tasks like bounding boxes, this is because the pre-labels have a higher Intersection over Union (IoU) with the correct annotations, so they need fewer corrections. Furthermore, using the best model enriches your data with more reliable predictions, which makes your trove of data easier to query and search.
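To make the IoU idea concrete, here is a minimal sketch of how it can be computed for two axis-aligned bounding boxes. The `(x_min, y_min, x_max, y_max)` box format and the function name `iou` are assumptions made for illustration; they are not taken from Labelbox.

```python
def iou(box_a, box_b):
    """Intersection over Union for two axis-aligned boxes.

    Each box is assumed to be (x_min, y_min, x_max, y_max).
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap region (zero if the boxes do not overlap).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - intersection

    return intersection / union if union > 0 else 0.0


# A pre-label shifted halfway off the correct box overlaps it by one third.
pre_label = (5, 0, 15, 10)
ground_truth = (0, 0, 10, 10)
overlap = iou(pre_label, ground_truth)
```

An IoU close to 1 means the pre-label barely needs editing, while a low IoU means the annotator has to redraw most of the box.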

Comparing vision LLMs using Labelbox Model Foundry

This flow chart provides a high-level overview of the model comparison process when using Labelbox Model Foundry.

With the Foundry add-on for Labelbox Model, you can evaluate a range of models for computer vision tasks to select the best model to perform pre-labeling or data enrichment on your data.

Step 1: Select images and choose a foundation model of interest

- To narrow in on a subset of data, use Catalog’s filters, including media attributes, natural language search, and more, to refine the set of images on which predictions should be made.

- Once you’ve surfaced data of interest, click “Predict with Model Foundry”.

- You will then be prompted to choose a model that you wish to use in the model run.

- Select a model from the ‘model gallery’ based on the type of task, such as image classification, object detection, or image captioning.

- To locate a specific model, you can browse the models displayed in the list, search for a specific model by name, or select individual scenario tags to show the appropriate models available for the machine learning task.

Step 2: Configure model hyperparameters and submit a model run

Once you’ve located a specific model of interest, you can click on the model to view and set the model and ontology settings or prompt. In this case, we will enter the following prompt: “Is there an entire swimming pool clearly visible in this image? Reply with yes or no only, without period.” This prompt is designed to elicit a simple “yes” or “no” response from the model.
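Even with a constrained prompt, a model may occasionally return extra whitespace, different capitalization, or a trailing period. If you post-process responses yourself, a small normalization step can help. The following is a sketch only; `parse_yes_no` is a hypothetical helper, not part of Labelbox or any model API.

```python
def parse_yes_no(response):
    """Map a free-text model response to True (yes), False (no), or None.

    None signals a response that did not follow the prompt's format,
    so it can be reviewed instead of silently counted as a "no".
    """
    # Drop surrounding whitespace, then stray punctuation and quotes.
    cleaned = response.strip().strip(".!\"'").lower()
    if cleaned == "yes":
        return True
    if cleaned == "no":
        return False
    return None


raw_responses = ["Yes", "no.", " YES ", "There might be a pool."]
parsed = [parse_yes_no(r) for r in raw_responses]
```

Returning `None` for off-format answers keeps them out of the metrics until someone has looked at them.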

- Each model has an ontology defined to describe what it should predict from the data. The available options depend on the selected model and your scenario. For example, you can edit a model ontology to ignore specific features or map the model ontology to features in your own (pre-existing) ontology.

- Each model will also have its own set of hyperparameters, which you can find in the Advanced model setting.

- To get an idea of how your current model settings affect the final predictions, you can generate preview predictions on up to five data rows.

While this step is optional, generating preview predictions allows you to confidently confirm your configuration settings:

- If you’re unhappy with the generated preview predictions, you can make edits to the model settings and continue to generate preview predictions until you’re satisfied with the results.

- Once you’re satisfied with the predictions, you can submit your model run.

Step 3: Predictions will appear in the Model tab

Each model run is submitted with a unique name, allowing you to distinguish between subsequent model runs.

When the model run completes, you can:

- View prediction results

- Compare prediction results across a variety of model runs and different models

- Use the prediction results to pre-label your data for a project in Labelbox Annotate

Step 4: Repeat steps 1-3 for another model from Model Foundry

- You can repeat steps 1-3 with a different model, on the same data and for the same desired machine learning task, to evaluate and compare model performance.

- By comparing the predictions and outputs from different models, you can assess and determine which one would be the most valuable in helping automate your data labeling tasks.

Step 5: Create a model run with predictions and ground truth

To create a model run with model predictions and ground truth, users currently have to use a script to import the predictions from the Foundry add-on for Labelbox Model and ground truth from a project into a new model run.

In the near future, this will be possible via the UI, and the script will be optional.
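The script itself is not reproduced in this post. As a rough illustration of the core idea only, the sketch below pairs predictions with ground truth by data row ID before they would be uploaded to a model run. It deliberately makes no Labelbox SDK calls; the dictionary layout and the helper names `pair_by_data_row` and `unmatched_rows` are assumptions made for this example.

```python
def pair_by_data_row(predictions, ground_truth):
    """Join predictions and ground truth on data row ID.

    Both arguments are assumed to be dicts of {data_row_id: label}.
    Only data rows present in both are returned, sorted by ID.
    """
    shared_ids = sorted(predictions.keys() & ground_truth.keys())
    return [(row_id, predictions[row_id], ground_truth[row_id]) for row_id in shared_ids]


def unmatched_rows(predictions, ground_truth):
    """Return IDs that appear on only one side, so gaps are visible."""
    only_predicted = sorted(predictions.keys() - ground_truth.keys())
    only_labeled = sorted(ground_truth.keys() - predictions.keys())
    return only_predicted, only_labeled


# Toy data with made-up IDs: True means "swimming pool present".
predictions = {"row-a": True, "row-b": False, "row-c": True}
ground_truth = {"row-b": False, "row-c": False, "row-d": True}

pairs = pair_by_data_row(predictions, ground_truth)
missing = unmatched_rows(predictions, ground_truth)
```

Checking for unmatched rows first avoids computing metrics on a model run where some images have a prediction but no ground truth, or the reverse.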

Step 6: Evaluate predictions from different models from Model Foundry in Labelbox Model

After running the script from Step 5, you'll be able to visually compare model predictions between two models.

- Use the ‘Metrics view’ to drill into crucial model metrics, such as confusion matrix, precision, recall, F1 score, and more, to surface model errors.

- Model metrics are auto-populated and interactive. You can click on any chart or metric to open up the gallery view of the model run and see corresponding examples.
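Labelbox computes these metrics for you, but it can help to see how they are derived for a yes/no task like this one. The sketch below uses the standard definitions of precision, recall, and F1; the function names and the toy labels are assumptions for illustration, not output from the product.

```python
from collections import Counter


def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for boolean labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    counts = Counter(tp=0, fp=0, fn=0, tn=0)
    for truth, pred in zip(y_true, y_pred):
        if truth and pred:
            counts["tp"] += 1
        elif pred:
            counts["fp"] += 1  # predicted a pool where there is none
        elif truth:
            counts["fn"] += 1  # missed a pool that is there
        else:
            counts["tn"] += 1
    return counts


def precision_recall_f1(counts):
    """Derive precision, recall, and F1 from confusion counts."""
    tp, fp, fn = counts["tp"], counts["fp"], counts["fn"]
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Toy example: True means "swimming pool present".
y_true = [True, True, False, False, True]
y_pred = [True, False, True, False, True]
counts = confusion_counts(y_true, y_pred)
precision, recall, f1 = precision_recall_f1(counts)
```

Running the same functions over each model's predictions on the same images gives directly comparable numbers.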

Step 7: Send model predictions as pre-labels to a labeling project in Annotate

- Select the best performing model and leverage the model predictions as pre-labels.

- Rather than manually labeling data rows, select and send a subset of data to your labeling project with pre-labels to automate the process.

Model comparison in practice

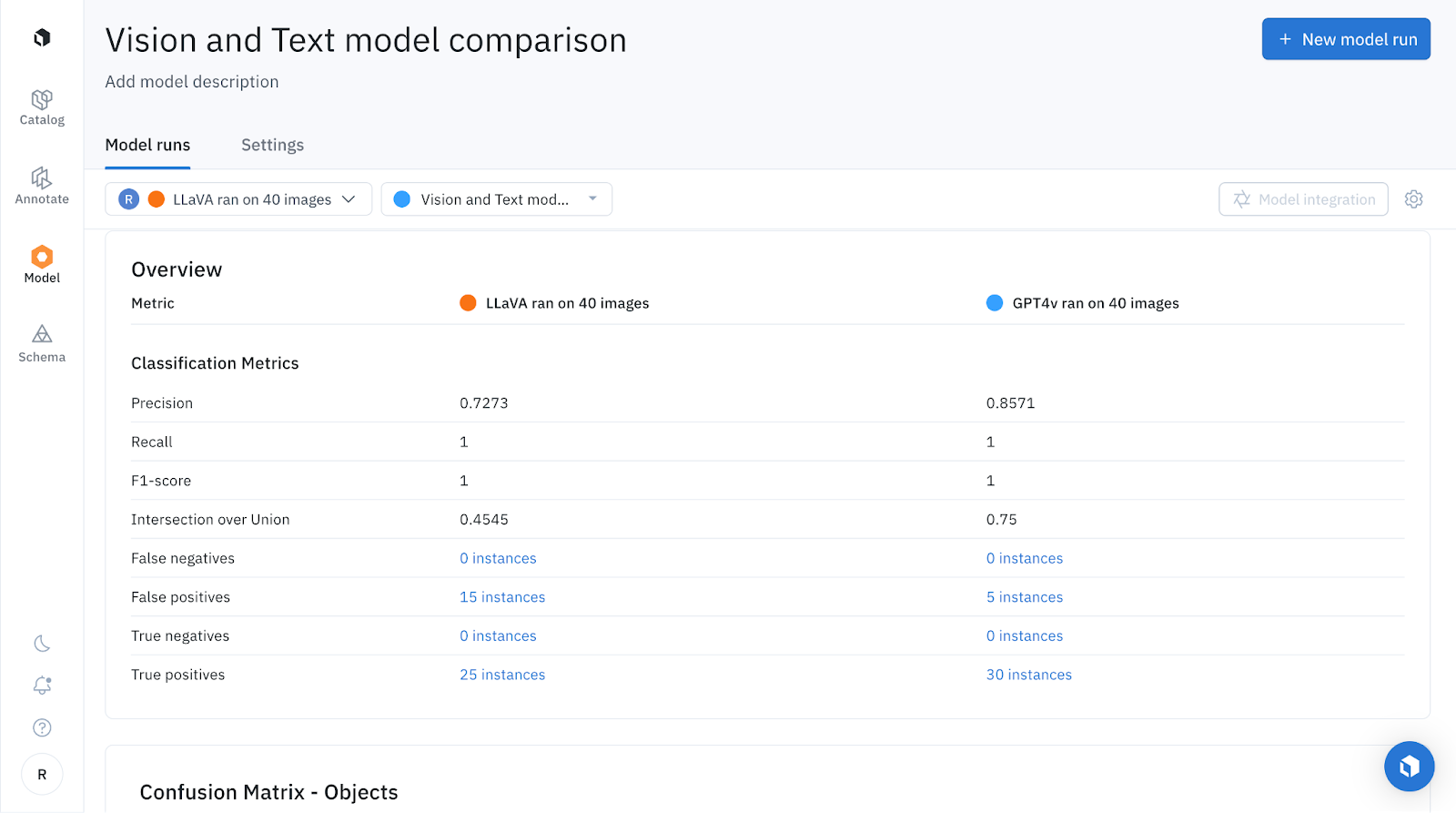

LLaVA vs GPT-4V

Quantitative comparison

In this example, LLaVA has 25 true positives, while GPT-4V has 30. LLaVA also has 10 more false positives than GPT-4V. On both counts, GPT-4V is the stronger model.

Qualitative comparison

Let’s now take a look at how the predictions appear on the images below:

In the examples above, GPT-4V and LLaVA both correctly predicted there to be a swimming pool.

In the two instances above, GPT-4V correctly identified the presence of a swimming pool, while LLaVA incorrectly predicted its absence. It's possible that LLaVA was misled by the shadows over the pool.

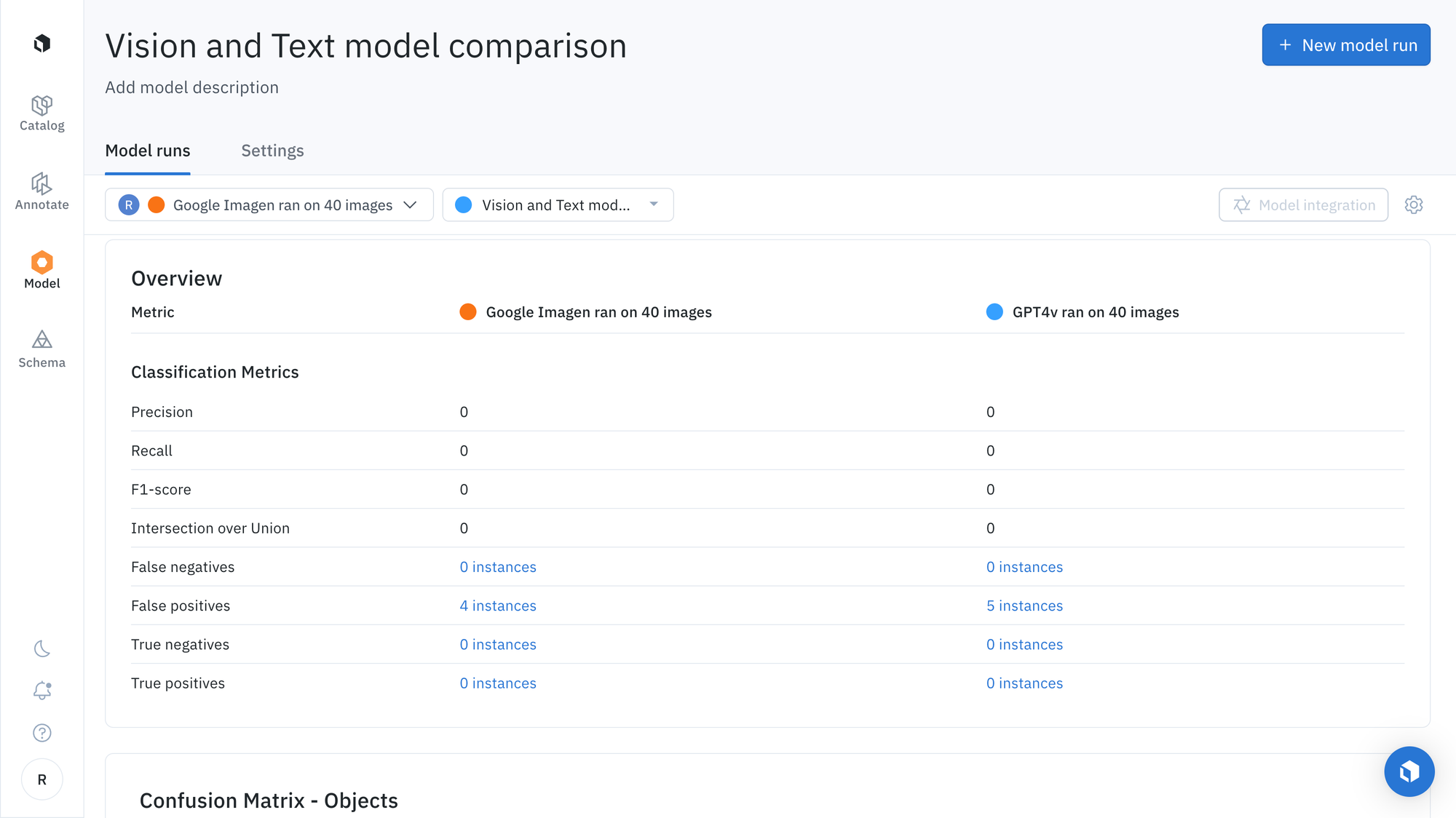

Imagen vs GPT-4V

Quantitative comparison

Imagen has 6 more true positives and 1 fewer false positive than GPT-4V, so based on these metrics Imagen is the better model in this test of 40 images.

Qualitative comparison

In the above example, GPT-4V and Imagen both correctly predicted there to be a swimming pool.

In the two instances above, GPT-4V and Imagen both correctly predicted there to be no obvious swimming pool.

Send the predictions as pre-labels to Labelbox Annotate for labeling

Since our evaluation found Imagen to be the best model in our test, we can send its predictions as pre-labels to our labeling project by highlighting all data rows and selecting "Send to annotate".

In conclusion, you can leverage Model Foundry not only to select the most appropriate model to accelerate data labeling, but also to automate data labeling workflows. Use quantitative and qualitative analysis, along with model metrics, to surface the strengths and limitations of each model and select the best performing model for your use case. Doing so can reveal detailed insights, as seen in the comparison between Imagen and GPT-4V above. In that example, Imagen emerged as the superior model, and it will allow us to rapidly automate data tasks for our given use case.

Model Foundry streamlines the process of comparing model predictions, ensuring teams are using the best-suited model for data enrichment and automation tasks. With the right model, teams can easily create pre-labels in Labelbox Annotate. Rather than starting from scratch, teams can boost labeling productivity by correcting the existing pre-labels.

Labelbox is a data-centric AI platform that empowers teams to iteratively build powerful product recommendation engines to fuel lasting customer relationships. To get started, sign up for a free Labelbox account or request a demo.
