How to harness AI for efficient video labeling

Introduction

Working in collaboration with numerous leading companies in artificial intelligence, we're observing a surge in enthusiasm for using advanced models to initially label data, integrating human expertise later to refine and tailor previously labor-intensive and time-consuming tasks.

These AI models are transforming one of the most daunting tasks in machine learning—the creation of high-quality video datasets. Utilizing such models allows machine learning teams to leverage automated tools to pre-label or enrich data, facilitating a range of applications from monitoring driver behavior to detecting objects in manufacturing environments.

This blog post will explore how models like Gemini 1.5 Pro, Grounding DINO, and SAM are redefining the video labeling landscape and boosting efficiency and speed.

By automating the labor-intensive labeling tasks, these models not only accelerate the workflow but also liberate time for users and decrease labeling costs.

Steps

Step 1: Select video

Workflow of selecting video of interest

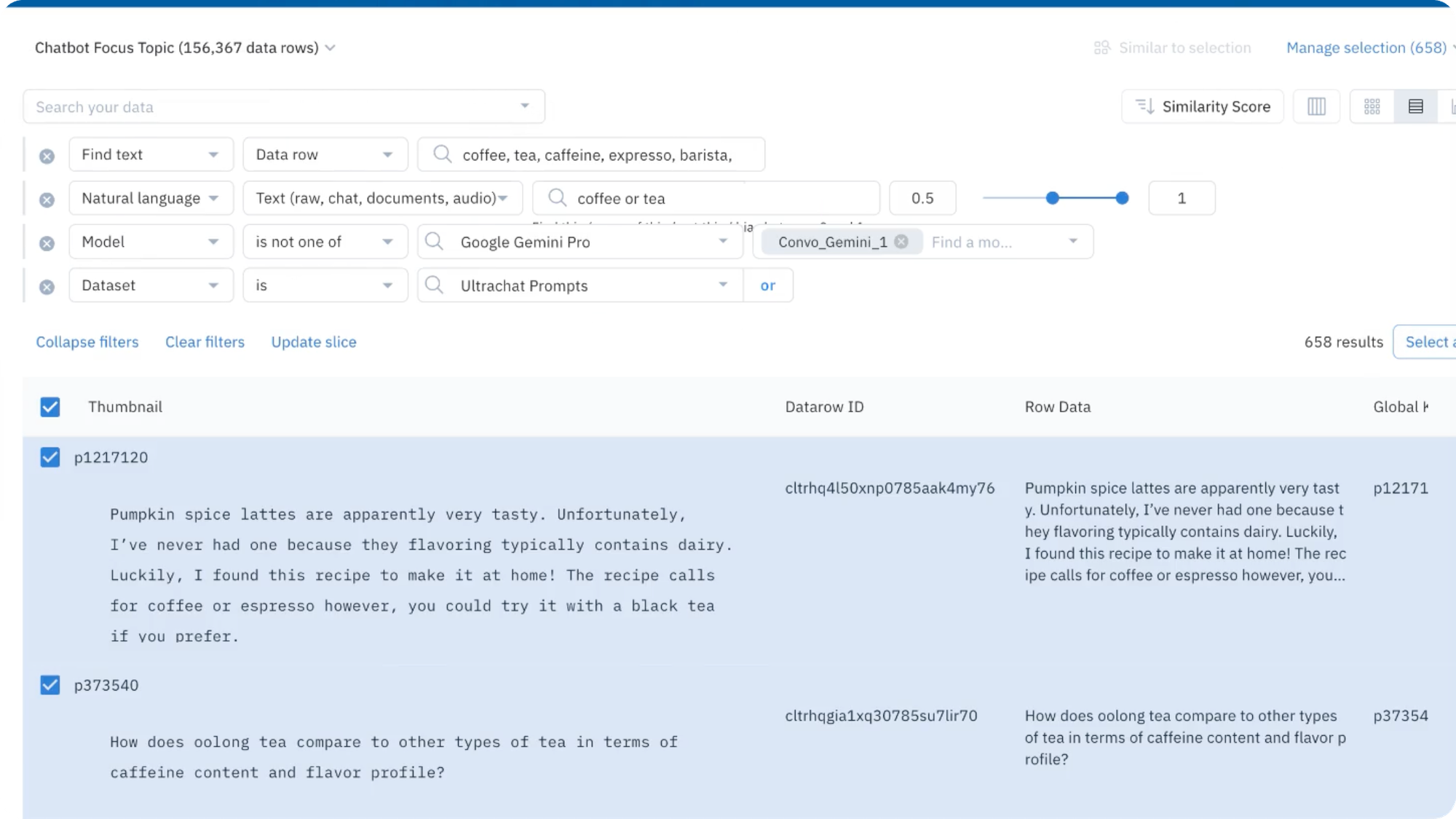

- To narrow in on a subset of data, users can use Labelbox Catalog filters, including media attributes, a natural language search, and more, to refine the images on which the predictions should be made.

- Users can click “Predict with Foundry” once the data of interest is selected.

Step 2: Choose a model of interest

Workflow of model of choice

- Users will then be prompted to choose a model of interest for a model run.

- Select a model from the ‘model gallery’ based on the type of task - such as video classification, video object detection, and video segmentation.

- To locate a specific model, users can browse the models displayed in the list, search for a specific model by name, or select individual scenario tags to show the appropriate models available for the machine-learning task.

Step 3: Configure model settings and submit a model run

Once the model of interest is selected, users can click on the model to view and set the model and ontology settings or prompt.

- Each model has an ontology defined to describe what it should predict from the data. Based on the model, there are specific options depending on the selected model and your scenario. For example, you can edit a model ontology to ignore specific features or map the model ontology to features in your own (pre-existing) ontology.

- Each model will also have its own set of settings, which can be found in the Advanced model setting.

- Users can generate preview predictions on up to five data rows to understand how the current model settings affect the final predictions.

While this step is optional, generating preview predictions allows users to confirm the configuration settings confidently:

- If users are unhappy with the generated preview predictions, they can edit the model settings and continue to generate preview predictions until they're satisfied with the results.

- After users are satisfied with the preview predictions, a model run can be submitted.

Step 4: Send the images to Annotate

Workflow of configuring the batch and click “Submit”

Users can transfer the results to a labeling project using the UI via the "Send to Annotate" feature. Labelers can then quickly review labels for accuracy.

Pre-labeling Use Cases

Example 1: Segmentation mask using Grounding DINO + SAM

Segmentation masks are used for autonomous vehicles, medical imagery, retail applications, face recognition and analysis, video surveillance, satellite image analysis, etc. Masks are some of the most time-consuming annotations to make for video. Below, we see an example of how this can be automated with Grounding DINO + SAM so the reviewers can make small edits if needed instead of starting from scratch.

Example 2: Bounding box using Grounding DINO

Bounding boxes are utilized in similar scenarios as segmentation masks, but these scenarios demand less precision than those requiring pixel-level (masks) detail. Bounding boxes can be automated using Grounding DINO, as illustrated below with detection of a person in video.

Example 3: Global classification using Gemini 1.5 Pro

Global classification for video is used when the overall classification for video is required like when a driver safety system needs to detect if a driver is distracted. Gemini 1.5 Pro can analyze an hour long video and provide answers about events that took place in the video. This automation reduces the need for human intervention, allowing personnel to focus on reviewing videos only when they are flagged with specific classifications as shown below.

Example 4: Frame based classification using Gemini 1.5 Pro

Frame-based classification is utilized in scenarios similar to segmentation masks. Gemini 1.5 Pro can analyze an hour-long video and identify the specific timestamps for a particular event. Below is an example that verifies whether the driver is distracted on each frame.

Additional considerations

Additional considerations as users incorporate Foundry labels into their projects and workflows:

- To produce frame based binary classifications in Gemini 1.5 Pro, users are recommended to experiment with the prompt and provide as much context as possible to get the best results.

- For example, the following yields better results:

- Users can also A/B test different models from Foundry to find the model that best fits the use case using Labelbox Model.

- If we do not support your use case or any questions arise, feel free to contact our support team, as we would love to hear your feedback to improve Foundry.

Conclusion

Annotating video data has traditionally been a tedious and time-consuming task. The integration of advanced AI models from Labelbox Foundry into the video labeling process marks a significant transformation in how video data is annotated. By leveraging Foundry's capabilities, users can drastically speed up their video labeling projects. This acceleration not only diminishes the time required to bring products to market but also substantially reduces the costs involved in model development.

Check out our additional resources on how to utilize state-of-the-art AI models in Foundry, including using model distillation and fine-tuning to leverage the power of foundation models:

All guides

All guides