Labelbox•March 24, 2025

Updated Labelbox Leaderboards: Kokoro, Imagen 3, AWS Nova Pro on the rise

We're thrilled to announce updated benchmarks and new model evaluations across all four of our GenAI model leaderboards. This latest refresh highlights remarkable advancements in AI technology, with the introduction of powerful new models such as Kokoro, Tencent Hunyuan, Imagen 3, OpenAI o1, and AWS Nova Pro that push the boundaries of what’s possible in artificial intelligence.

New models integrated across image generation, speech generation, video generation, and multimodal reasoning

The updated leaderboards include the most cutting-edge models in the AI industry. The additions across various categories now include:

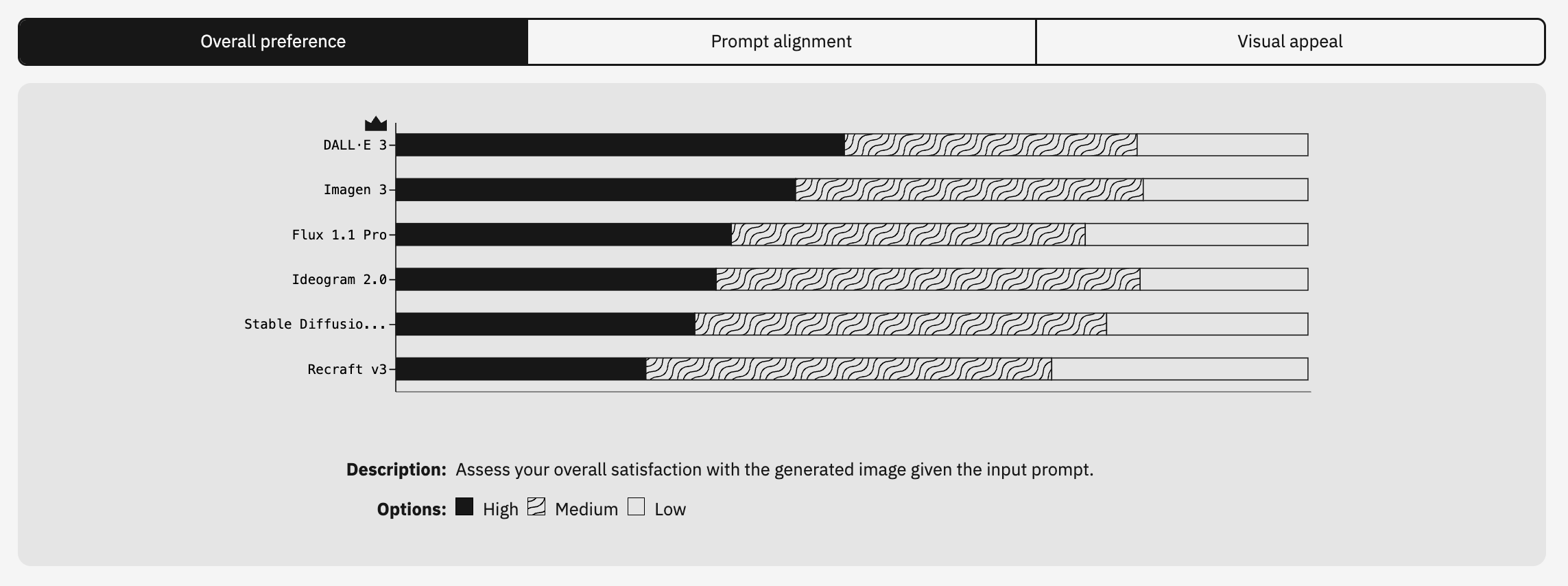

- Image generation: Imagen 3 and Recraft v3 join the rankings, offering exciting new possibilities for image synthesis.

- Speech generation: Kokoro and XTTS-v2 have been integrated into our evaluations, adding fresh perspectives to the world of speech synthesis.

- Video generation: Tencent Hunyuan and Luma Ray 2 are now part of the leaderboard, reflecting the ongoing advancements in video content creation.

- Multimodal reasoning: O1, AWS Nova Pro, and Gemini 2.0 Flash are now featured, demonstrating the power of models that work across multiple modalities (such as text, image, and video).

These new models not only represent the cutting-edge of AI technology, but they also introduce fresh competition, making our evaluations even more dynamic.

Enchanced leaderboard methodology

Our approach to model evaluation continues to rely on independent human evaluators and is grounded in precision, transparency, and reliability. Our methodology not only measures the technical performance of AI models but also reflects their real-world capabilities and usefulness across a wide range of tasks, all rated by a diverse group of real people.

We’ve enhanced our methodology across the board to ensure that each model is assessed thoroughly, these updates include:

- Annotator-driven rankings: Human annotators play a key role in comparing and ranking outputs based on multiple parameters such as coherence, creativity, spatial accuracy, and contextual alignment. This helps ensure that each model’s strengths and weaknesses are properly ranked. We continue to enhance and refine our recruitment of highly-skilled evaluators as well as focus on building a diverse team to provide an unbiased preference evaluation of each model’s capabilities.

- Standardized evaluation criteria: To maintain consistency, we rely on rigorously tested metrics across all categories, ensuring that each model is measured by the same standards. While we still use Elo rating as the standard for our evaluation, we’ve also added human preference evaluation as an alternative evaluation criteria.

- Increased rigor: Alignerr, a community of subject matter experts from several disciplines operated by Labelbox, has expanded to handle increasingly complex multimodal evaluations. This allows for deeper, more accurate assessments of how these models perform across different tasks and contexts. We updated the rating criteria and updated the task ontologies to capture a deeper analysis of each model.

Analyzing model performance: Massive improvements in image generation, speech generation, video generation, and multimodal reasoning

Overall the new models evaluated in this round of leaderboard updates delivered mixed results across the different categories. Despite being new, the recently released or updated models did not automatically jump to the top of our leaderboards, indicating that older models are robust enough to stand the test of time or still have a strong base of foundational model training.

The highlights from our evaluations of new models include:

Image generation: Imagen 3 debuts at the top position with a Trueskill rating of 1059.11 while DALL-E 3 takes second place with 1031.65. Recraft v3 ranks in the last spot, facing tough competition.These results were influenced most by human preference evaluation, where Imagen 3 scored highest according to our Alignerr team when asked to assess the overall satisfaction with the generated image given the input prompt.

Speech generation: ElevenLabs maintains its top position with an Elo rating of 1254 while new model Kokoro sits squarely in the middle at 968. The other new model, XTTS-v2 earned an Elo rating of 694 in its debut on our leaderboards. The speech generation models were evaluated on their ability to understand contextual information, how correctly it was able to recognize pronunciation accuracy, and its ability to produce natural sounding speech.

Video generation: Luma Ray 2 and Tencent Hunyuan emerged strong, securing second with an Elo rating of 1152.5 and third positions with an Elo rating of 1035.55 respectively. For this category, models were evaluated how well the input prompt was represented in the output video content.

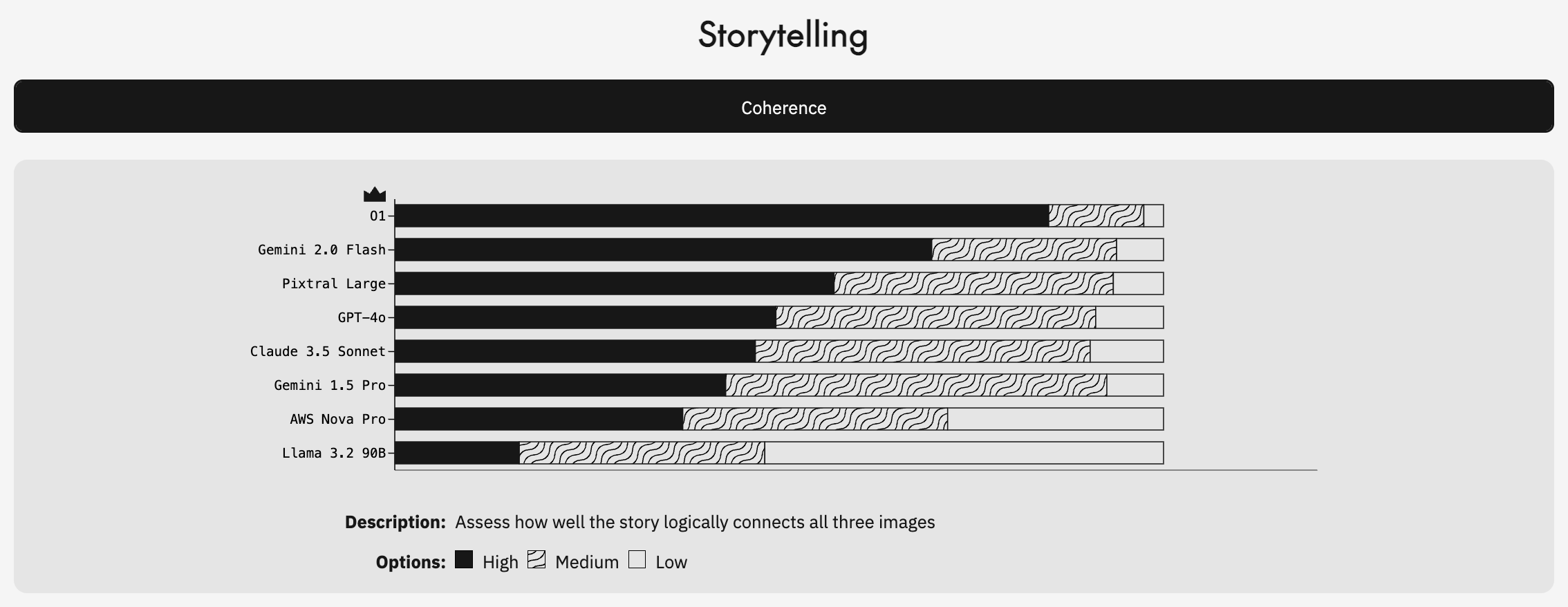

Multimodal reasoning: For this category, equal weight and evaluation criteria was given to the model’s ability to conduct logical storytelling, detect differences in images, generate image captions, and perform spatial reasoning.

- Spatial Accuracy: Claude 3.5 Sonnet leads, closely trailed by Gemini 2.0 Flash.

- Caption Creativity: Pixtral shines brightest, showcasing creativity, while O1 highlights that strong reasoning does not always translate to creative outputs.

- Image Differences: Pixtral tops the category, followed closely by newcomers O1 and Gemini 2.0 Flash.

- Storytelling: O1 and Gemini 2.0 Flash distinguish themselves, demonstrating substantial narrative capabilities.

We can see from these results that new models don’t necessarily outperform the old ones. Just because something is the latest version, doesn’t necessarily mean that it’s the best performing one.

There are a few reasons for this, the primary one being, older models, especially those that have been around for a while, tend to have undergone extensive fine-tuning and optimization over time. During this lifecycle, they are likely to have been improved through real-world feedback, bug fixes, and adjustments made by developers. These models might have become more reliable and consistent, as they’ve had more time to adapt to a wide range of tasks and edge cases. In contrast, newer models may still be in the early stages of this fine-tuning process, so while they may have strong potential, future evaluations will better reveal their trajectory and impact over time.

What's next: Reasoning leaderboard

Looking ahead, we’re excited to share that we will soon be launching a fifth leaderboard based on our unique human preference evaluations: the Labelbox Reasoning Leaderboard. This will provide rigorous evaluations of AI models’ mathematical and coding reasoning skills, offering deeper insights into how models handle complex logical tasks. Stay tuned for more on this in the near future.

Final thoughts on Labelbox Leaderboards updates

With new models constantly emerging, our human preference-driven leaderboards remain an essential benchmark for accurately assessing real-world advancements in AI. Even as new models are released, we can see that older models are able to stand the test of time and even outperform the new models based on our benchmarking criteria due to growing pains such as a lack of fine-tuning, higher computational demands, or struggles in specific task domains.

As we continue to update and refine these rankings, we are committed to keeping our community informed about the latest, most verified developments in artificial intelligence.

We look forward to bringing you even more insights as the field of AI continues to evolve at an exciting pace. If you have suggestions or want a specific model included in future assessments, let us know or contact us to learn more about how we can help you evaluate and improve your AI models across all modalities.

All blog posts

All blog posts