Labelbox•June 3, 2022

Why data quality is important for machine learning

The difference between a good AI product and a bad AI product is simple—the quality of the data it's trained on.

There are hundreds of real-world use cases that showcase examples of poor quality AI (such as chatbots misunderstanding customers) and the reason for failure is always the same—they weren’t trained with high-quality labeled data.

Feed a model low-quality data and you’ll get low-quality results. This leads to model errors that take extra time and effort to fix, a higher margin of error when the AI is making decisions, and a longer model training process. All of these factors cost your business unnecessary time and money.

In this article, we’ll guide you through why having high-quality data is important for machine learning, the actual impact and cost of low-quality data, and how to create high-quality data if you don’t have it.

Why high-quality data is important for machine learning

Machine learning models are only as good as the quality of the data they are trained on. A high-performing model cannot be created with data that is riddled with errors, contains duplicates, and other anomalies. Having high-quality data also:

- Accelerates the model training process: High-quality data means fewer errors in the model training process which leads to less time spent manually fixing errors.

- Decreases your margin of error: By default, it is very difficult and unrealistic to expect a machine learning model to be accurate 100% of the time, considering biases, variance in real-world data, and the iterative nature of model development. However, decreasing your margin of error and improving confidence in your model will help you deliver performant, robust, and trustworthy models faster.

- Allows models to handle more complex use cases: When you have low-quality data, your models will struggle with simple tasks, leading you to spend more time trying to build this foundation before being able to move on to more complex edge cases.

- Keeps costs to a minimum: The model training process can quickly become expensive when an AI project is consistently delayed due to fixing errors and data quality issues. Having high-quality data helps with cost control by ensuring that the model training process goes smoothly.

The impact of low-quality data

Issues in data quality affect every stage of the model training process. When AI teams fail to address these issues, the entire machine learning workflow is delayed, from end-to-end. Having low-quality data also results in:

- Poor experiences for the end user: If customer-facing AI products are trained on low-quality data, these products are going to end up causing the end user to have a poor experience and a negative impression of your business. Going back to the earlier example of AI chatbots, if a customer is trying to get support on a matter but an AI chatbot isn’t performing as it should, this could cause the customer to churn.

- Lost time and financial costs: Trying to fix mistakes retroactively in the model training process is much more time-consuming and complex than improving the quality of the data from the start. This leads to additional financial costs that arise when faced with fixing a high volume of model errors, as well as time lost re-training the model to validate these error fixes.

- Project abandonment: One of the worst-case scenarios when it comes to low-quality data in model training is project abandonment. If the model isn’t producing ideal results and it’s deemed too costly or time-consuming to fix, there’s a high chance that the project will be abandoned.

Minor problems in the input data going into training a model can quickly turn into large-scale issues during the output. Issues in data quality must be addressed early on in the model training process to avoid these potential complications.

How to create high-quality data for machine learning

Improving the quality of training data, however, can mean something different for every use case, model, and even iteration cycle. However, there are typically three ways to improve the quality of your data for model training.

The first is enhancing your data annotation pipeline, the second is observing your model in the training stage to better understand its specific needs, and the third is by expanding your dataset.

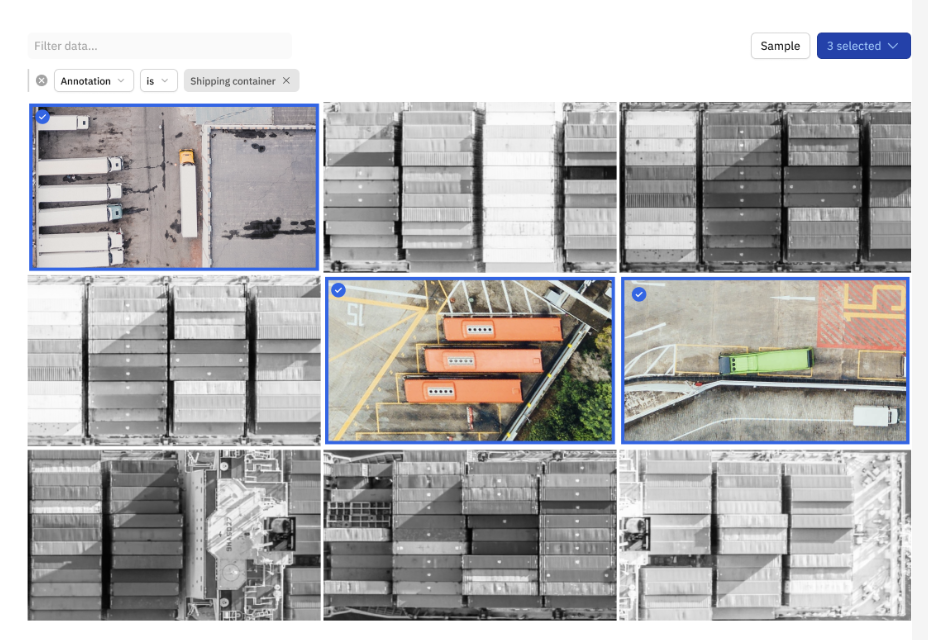

The video example below shows the first and most basic way to improve model performance—finding and fixing labeling errors in your data.

Each data annotation team is unique, and due to biases and natural human error, it’s not uncommon for there to be a handful (or more) of labeling errors in any dataset. The first step to improving your machine learning models is by finding these errors and then sending them to be corrected.

With a tool like Labelbox Model, once you upload your model predictions and model metrics, you can unlock powerful workflows to label high-impact data, faster and more efficiently. You can easily surface labeling mistakes by visualizing where ground truths and your model predictions agree or disagree. This not only helps speed up your labeling efforts and increases label quality, but can help reduce your labeling budget.

Final thoughts on data quality for machine learning

Machine learning models use training data to learn and make decisions. This data is arguably the foundation of how well a model is able to perform. Regardless of how you try to improve the model, if you don't have high-quality data from the very start, you'll quickly hit limitations in model performance.

Labelbox is a best-in-class AI data engine that helps you identify and fix errors in your data as well as test and improve your model to get to performant AI, faster. Download the complete guide to data engines to learn more.

All blog posts

All blog posts