Manu Sharma•July 24, 2019

The Side of Machine Learning You’re Undervaluing and How to Fix It

Matt Wilder, PhD (matt@wilderai.com). Matt has over 5 years of experience building computer vision applications across a wide range of domains. Through his company Wilder AI, he helps customers develop robust solutions to unique and challenging computer vision problems. His experience working with a variety of companies has brought him face-to-face with many complex, real-world datasets.

Development of machine learning models gets stunted by a lack of long-term thinking and strategic planning.

“The fact that there aren’t more people knocking down the gates demanding commercial labeling tools is a sign that not many people are using vision models in production. Because as soon as you start to use vision models for something real, you need better labeled data, a process, and all of this core infrastructure.”

Pete Warden, Staff Research Engineer at Google

There are two sides to any machine learning project. And while I wouldn’t say I’m equally passionate about both, I’m a pragmatist and recognize the criticality of each. These sides are the machine learning model and the labeled training data (training, validation, and test sets).

Think of these two sides as a car and its fuel. You can’t get across town if you have a can of gas but no car, and you can’t drive a car on an empty tank. You need both if you plan to get to your destination.

In machine learning, you need an effective model (the car) and high-quality training data (the fuel) to achieve your outcome. Both sides are essential. Despite this fact, the training data side of the equation is continually undervalued to the detriment of many machine learning projects. How can this hold a project back?

The Most Hyped Challenge in Machine Learning: Self-Driving Cars

In an article last fall, CB Insights identified 46 companies that are trying to crack the self-driving car problem. Machine learning is playing a critical role in this.

While I can’t say for certain that Tesla is leading the race toward building a self-driving car, I can say that they’ve created the ultimate data-collection machine (equipped with radar, 8 cameras, sonar, and GPS, all simultaneously capturing data). This setup allows Tesla to collect huge amounts of training data while simultaneously enabling their self-driving AI to shadow a human driver and learn from the decisions they make.

The point is, they didn’t treat training data as an afterthought. They made it an integral part of their plan, and this decision has allowed them to iterate on their self-driving AI quickly and develop a functional system faster than many of their competitors. What stops more people from taking this approach?

Training Data = I’ll Worry About It Later

“When embarking on building an AI system, forget about the machine learning model component and think about Wizard of Oz-ing it at first. Have a person behind the curtain who’s implementing the machine learning model in their brain. This allows you to iterate more quickly on the product and nail down the actual requirements for the model. By executing this process, you invariably create an initial labeled dataset that gives you a manual and rules for labelers to follow as you scale training data to support the production model.”

Pete Warden, Staff Research Engineer at Google
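As a rough illustration of the Wizard of Oz idea in the quote above, here is a minimal sketch in which a person stands in for the model and every answer is logged as a labeled record. The names `run_session` and `ask_human`, the CSV layout, and the file names are all hypothetical choices for this example, not part of any particular tool.

```python
import csv
import io


def run_session(items, ask_human, out_file):
    """Serve "predictions" from a person and log each one as a labeled record.

    `ask_human` is a hypothetical callable that shows an item to a person
    and returns the label they chose. In a real product it might be a
    review UI; here it can be any function.
    """
    writer = csv.writer(out_file)
    writer.writerow(["item", "label"])
    results = []
    for item in items:
        label = ask_human(item)          # the person behind the curtain
        writer.writerow([item, label])   # the log doubles as training data
        results.append((item, label))
    return results


# Example run with a scripted "human" so the sketch is self-contained.
scripted_answers = {"img_001.jpg": "cat", "img_002.jpg": "dog"}
log = io.StringIO()
results = run_session(scripted_answers, scripted_answers.get, log)
```

The point of the design is that the product loop and the labeling loop are the same loop: the log written while the person answers is already an initial labeled dataset.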

The tendency to undervalue training data isn’t a decision that developers and data scientists consciously make. It’s something that happens as a result of multiple factors coming together. Here are three of the main culprits that make us treat training data as an afterthought:

- Mindset: You entered your field to solve problems. This is creative work that is highly engaging to technically-minded people. As a result, working on machine learning models will be far more appealing to you, and by default, training data will take second place.

- Advances: In the AI literature, most of the exciting advancements are focused on the machine learning methods. As a result, it’s easy to fixate on applying the latest, greatest techniques to your problems and let the important dataset management issues fall by the wayside.

- Chores: No one drools over a picture of a new gas formula; they drool over the sports car. When compared with the creative work, the idea of overseeing, curating, and labeling training data can feel like a chore.

These factors can have a serious impact on our personal and organizational priorities. They lead to training data becoming the forgotten component in any machine learning project: the thing that you need to worry about, but not right now.

What’s the effect of treating training data this way?

The Role of High-Quality Training Data

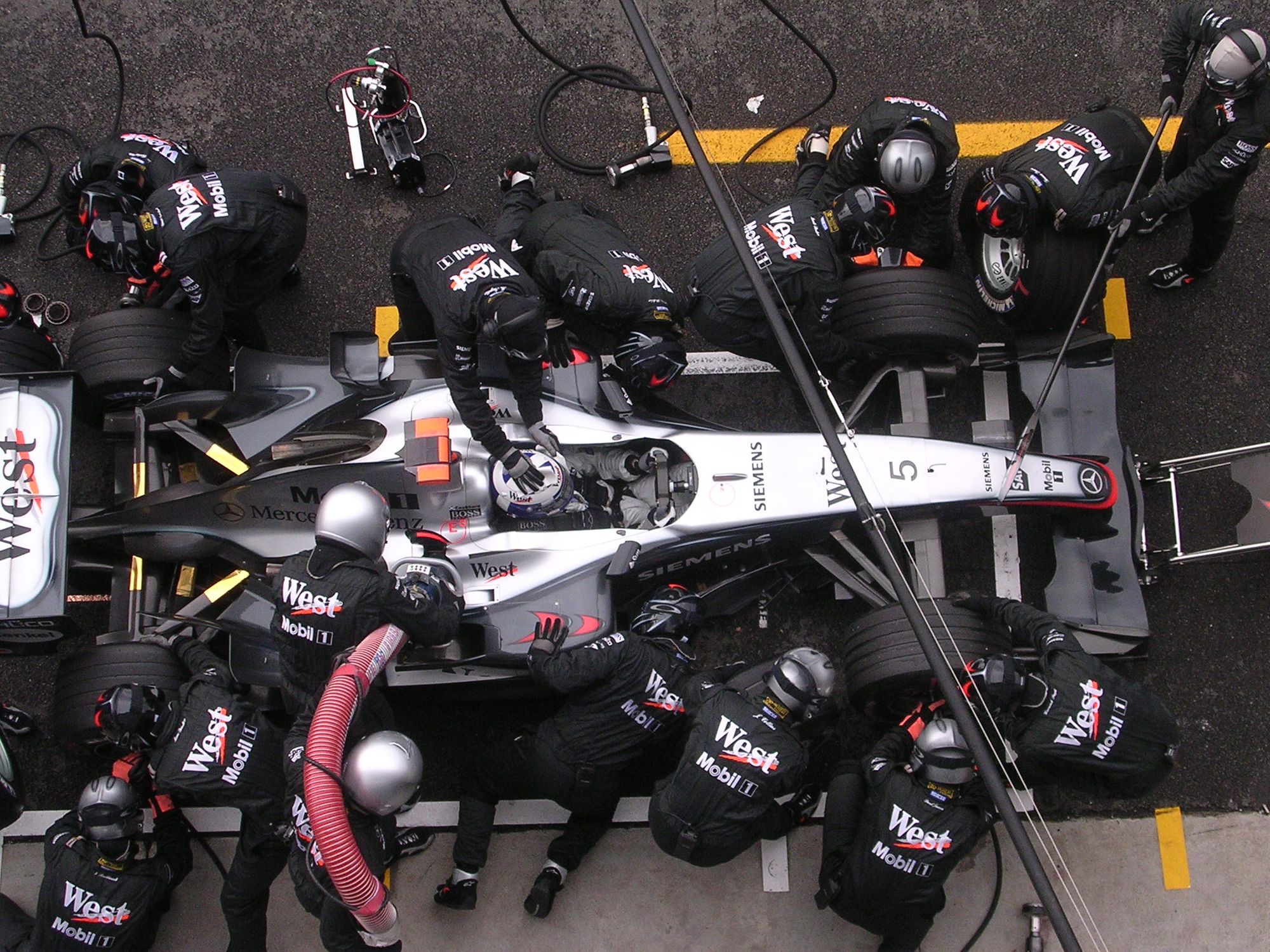

Let’s go back to the car example for a minute. Imagine that an F1 race car were to fuel up with lower-quality fuel right before an important race. What would the effect be? Over the course of the race, the fuel would degrade the parts, hinder the car’s performance, and could even result in catastrophic failures.

It doesn’t matter how state-of-the-art the car is; bad fuel will cause it to underperform. The same logic applies to your machine learning model. The quality of your training data will affect the performance of any machine learning model, no matter how revolutionary it may seem to be.

I’ve seen many organizations lose valuable time because they didn’t take training data seriously from the start. They end up spending less time problem-solving and developing models, and more time gathering datasets and revalidating their original model. When you take your training data seriously, you hit the ground running: you validate your initial machine learning model, and then go back and iterate on your problem-solving work.

Building a well-oiled data generation and labeling system also allows you to generate new datasets quickly. This enhances the agility of your machine learning team and allows for a more thorough exploration of the problem you plan to solve.

To see a project through and have high-quality data at every juncture, you need to shift your mindset.

How to Improve Your Training Data From Start to Finish

Managing training data is an operational task. It requires foresight, planning, and a clear end result in mind. This means that improving your training data is relatively simple when compared with working on your machine learning model. These are the three things that I recommend you do if you want to improve your training data:

- At the start of the project, try to envision a data labeling framework that will support producing hundreds of thousands of records.

- Seek out tools and platforms that support annotation at scale.

- Design a forward-thinking annotation ontology, but don't let this paralyze the process.
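One way to read "forward-thinking" in the last recommendation is an ontology that can be refined later without invalidating labels you already have. The sketch below is one possible shape for that, assuming a simple class hierarchy; the `Ontology` class, its methods, and the example class names are hypothetical and not taken from any specific labeling platform.

```python
from dataclasses import dataclass, field


@dataclass
class Ontology:
    """Hypothetical label ontology: class names arranged in a hierarchy."""
    version: int = 1
    # Maps each class name to its parent class (None for top-level classes).
    parents: dict = field(default_factory=dict)

    def add_class(self, name, parent=None):
        """Add a class and bump the version so labels can record which one they used."""
        if name in self.parents:
            raise ValueError(f"class {name!r} already exists")
        if parent is not None and parent not in self.parents:
            raise ValueError(f"unknown parent {parent!r}")
        self.parents[name] = parent
        self.version += 1

    def ancestors(self, name):
        """Return the chain from `name` up to its top-level class."""
        chain = []
        while name is not None:
            chain.append(name)
            name = self.parents[name]
        return chain

    def is_a(self, name, ancestor):
        """True if `name` is `ancestor` or one of its descendants."""
        return ancestor in self.ancestors(name)


# Start coarse so labeling can begin right away...
ontology = Ontology()
ontology.add_class("vehicle")
ontology.add_class("car", parent="vehicle")
# ...and refine later. Records labeled "vehicle" earlier remain valid.
ontology.add_class("truck", parent="vehicle")
```

Starting with a coarse class and adding children later is what keeps the design from paralyzing the process: labeling can begin before every distinction has been decided.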

By taking these steps, you’ll ensure that your project has the fuel it needs to achieve a meaningful impact. You’ll also free your time to focus on improving your machine learning model and more fully explore the problem space.
