How to maintain quality and cost with advanced analytics

A strong data engine depends on producing large volumes of high-quality training data. Slow quality management, or a lack of metrics and insight into labeling quality, throughput, and efficiency during the labeling process, can significantly hinder model development.

To maximize efficiency and control cost while ensuring labeling quality, ML teams need strong data management, quality and performance monitoring, and advanced techniques that improve the speed of their labeling operations.

Get full transparency with labeler and annotation analytics

AI team managers and administrators can track live analytics throughout their labeling projects to monitor quality, throughput, and efficiency. With Labelbox, teams can also drill down into each labeler's progress and performance, including labeling time, review and rework time, total time spent, and more.

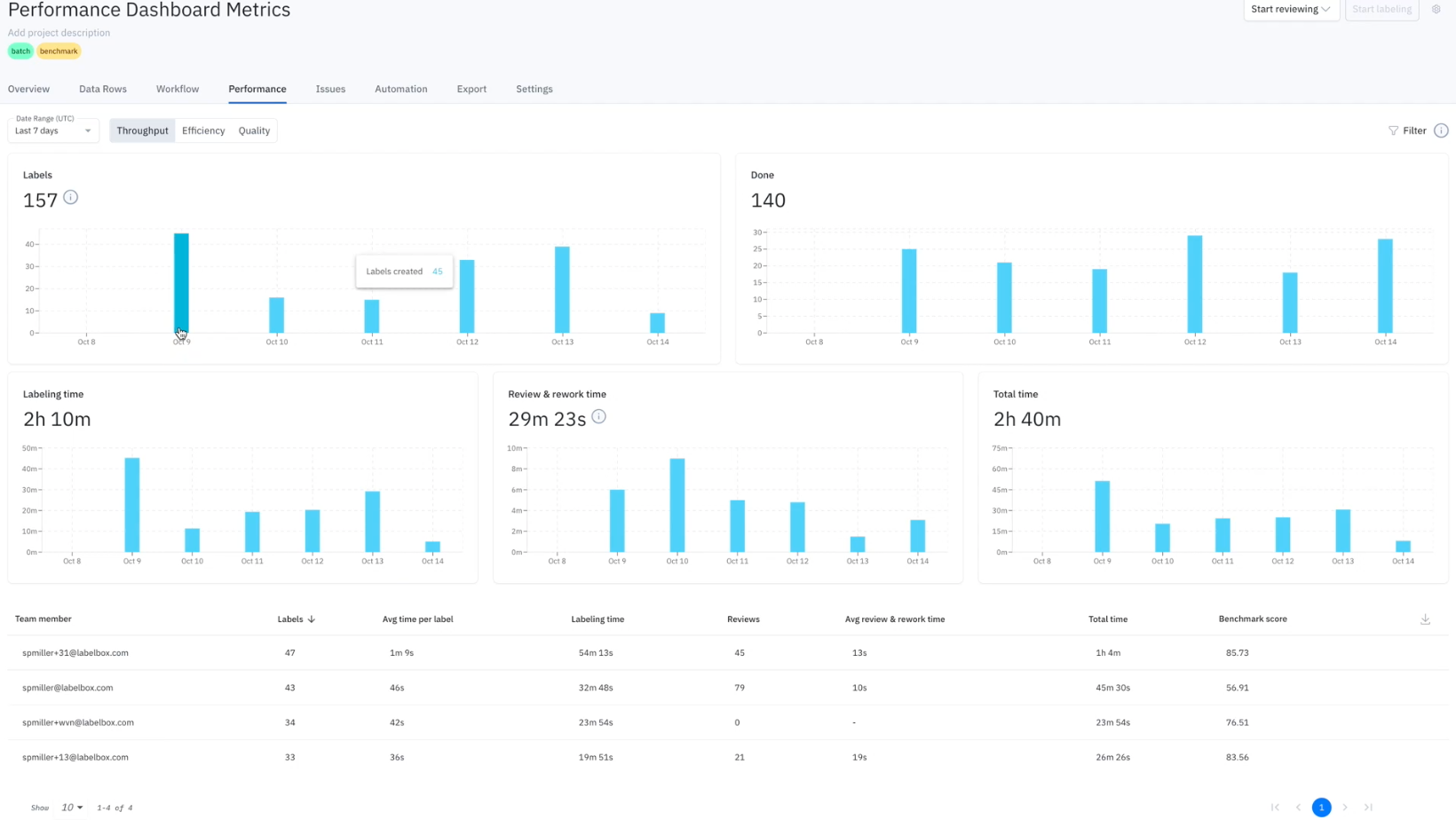

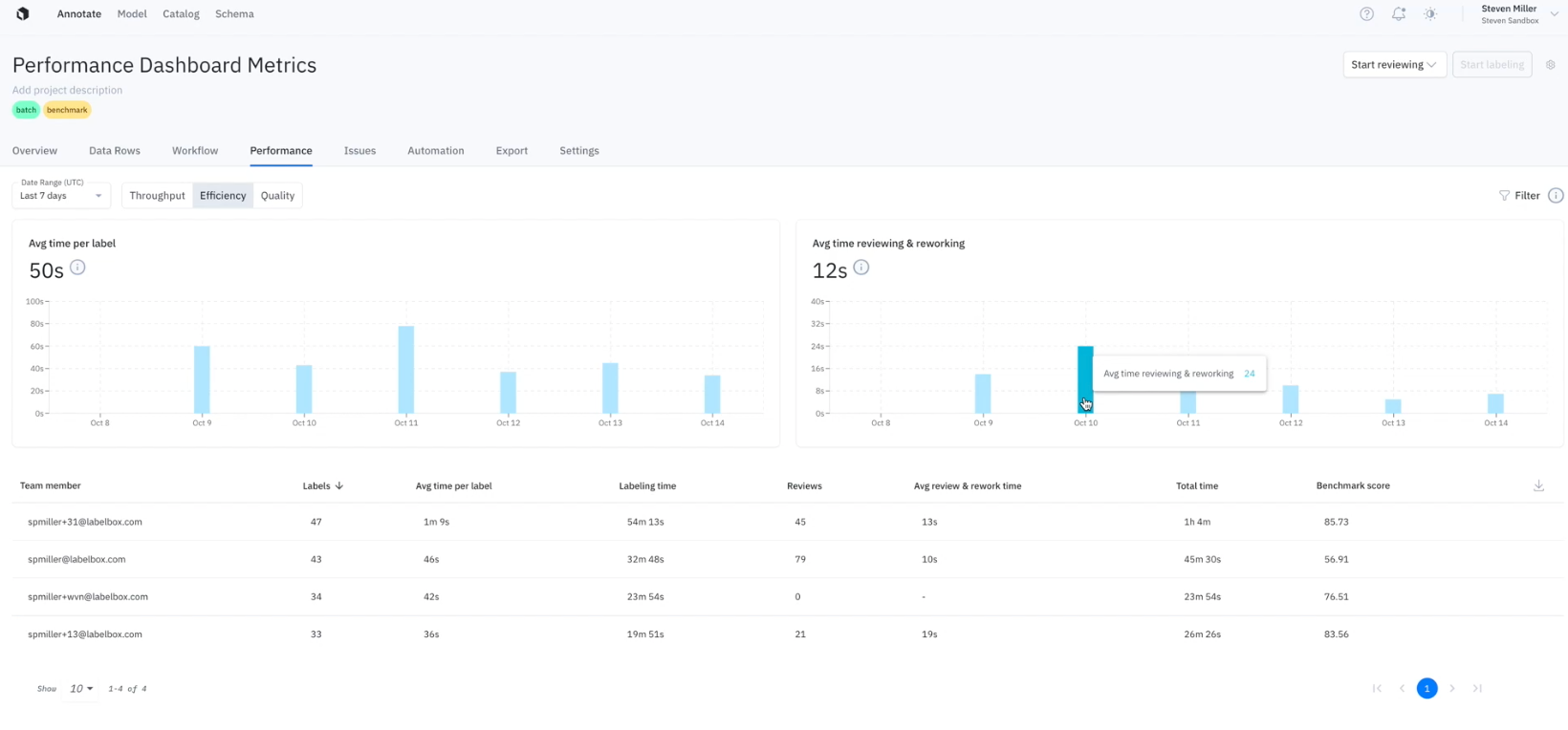

Project performance dashboard

The project performance dashboard is one of the primary tools for managing labeling operations in a Labelbox project. It gives you a holistic view of your project's throughput, efficiency, and quality throughout the labeling process.

Throughput

The Throughput view provides insight into the amount of labeling work being produced. It can help answer questions such as:

- How many assets were labeled in the last 30 days?

- How much time is being spent reviewing labeled assets?

- What is the average amount of labeling work being produced?
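To make these throughput questions concrete, here is a minimal sketch that answers them from a list of exported label records. It does not use the Labelbox SDK or its export format. The `LabelRecord` fields and the `throughput_summary` function are hypothetical and exist only for illustration. "Average work produced" is interpreted here as distinct assets labeled per day, which is only one possible reading.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


# Hypothetical record shape; field names are assumptions, not a Labelbox export schema.
@dataclass
class LabelRecord:
    asset_id: str
    labeler: str
    submitted_at: datetime
    review_seconds: float


def throughput_summary(records, now, window_days=30):
    """Summarize labeling throughput over the trailing `window_days` days."""
    start = now - timedelta(days=window_days)
    recent = [r for r in records if start <= r.submitted_at <= now]
    assets = {r.asset_id for r in recent}  # each asset counts once, even if relabeled
    review_hours = sum(r.review_seconds for r in recent) / 3600
    return {
        "assets_labeled": len(assets),
        "review_hours": review_hours,
        "assets_per_day": len(assets) / window_days,
    }


records = [
    LabelRecord("a1", "ana@example.com", datetime(2024, 6, 10), 120.0),
    LabelRecord("a2", "ben@example.com", datetime(2024, 6, 20), 240.0),
    LabelRecord("a3", "ana@example.com", datetime(2024, 4, 1), 60.0),  # outside the window
]
print(throughput_summary(records, now=datetime(2024, 6, 30)))
```

Counting distinct asset IDs rather than raw records keeps relabeled assets from inflating the throughput number. Review time, however, still accumulates across every submission.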

Efficiency

The Efficiency view provides insight into the time spent per unit of work. It can help answer questions such as:

- What is the average amount of time spent labeling an asset?

- How can I reduce time spent per labeled asset to be more efficient?
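Average time per asset is a simple ratio, and comparing it at the project level and per labeler shows where time is going. The sketch below assumes a hypothetical `LabelTiming` record with one entry per labeled asset. It is not how Labelbox computes its dashboard values.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


# Hypothetical per-asset timing record (not a Labelbox schema).
@dataclass
class LabelTiming:
    labeler: str
    label_seconds: float


def avg_seconds_per_asset(timings):
    """Project-level average labeling time per asset (needs at least one record)."""
    return mean(t.label_seconds for t in timings)


def avg_seconds_by_labeler(timings):
    """Per-labeler average labeling time per asset."""
    by_labeler = defaultdict(list)
    for t in timings:
        by_labeler[t.labeler].append(t.label_seconds)
    return {name: mean(secs) for name, secs in by_labeler.items()}


timings = [
    LabelTiming("ana@example.com", 30.0),
    LabelTiming("ana@example.com", 50.0),
    LabelTiming("ben@example.com", 100.0),
]
print(avg_seconds_per_asset(timings))   # 60.0
print(avg_seconds_by_labeler(timings))  # {'ana@example.com': 40.0, 'ben@example.com': 100.0}
```

Comparing each labeler's average against the project average highlights where time per asset is highest. That is where efficiency improvements are most likely to pay off.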

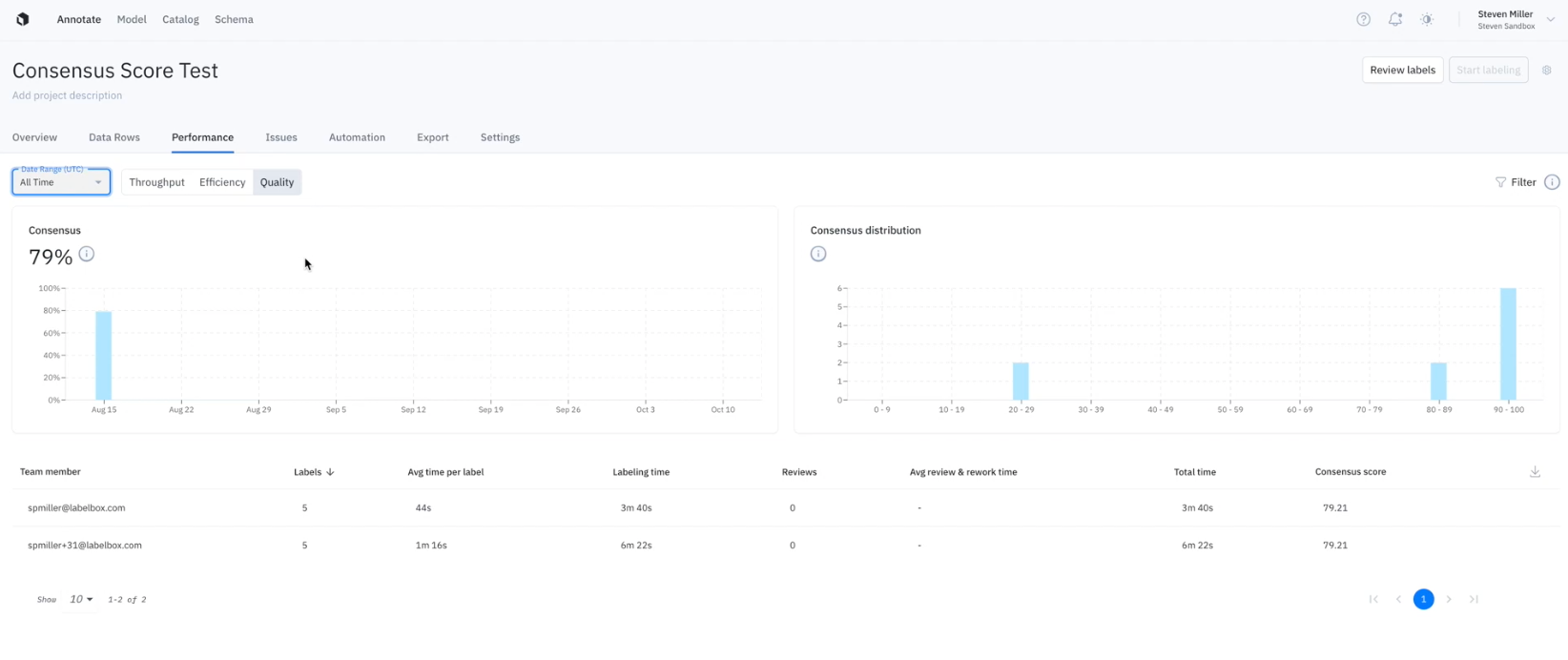

Quality

The Quality view provides teams with insight into the accuracy and consistency of the labeling work being produced. It can help answer questions such as:

- What is the average quality of a labeled asset?

- How can I track label quality and make it more consistent across the team?
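This guide does not specify how quality is scored. The sketch below therefore assumes that each label already carries a hypothetical 0 to 1 quality score, for example from a review or a comparison against a reference label. It separates the two questions: the average score measures quality, and the spread of per-labeler averages measures consistency across the team. `ScoredLabel`, `average_quality`, and `team_spread` are illustrative names, not Labelbox APIs.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, pstdev


# Hypothetical: each label carries a 0-1 quality score assigned elsewhere.
@dataclass
class ScoredLabel:
    labeler: str
    score: float


def average_quality(labels):
    """Project-level average quality score across all labels."""
    return mean(label.score for label in labels)


def team_spread(labels):
    """Population standard deviation of per-labeler average scores.

    Lower values mean labelers produce work of more similar quality.
    """
    by_labeler = defaultdict(list)
    for label in labels:
        by_labeler[label.labeler].append(label.score)
    per_labeler_means = [mean(scores) for scores in by_labeler.values()]
    return pstdev(per_labeler_means)


labels = [
    ScoredLabel("ana@example.com", 1.0),
    ScoredLabel("ana@example.com", 0.5),
    ScoredLabel("ben@example.com", 0.25),
]
print(average_quality(labels), team_spread(labels))
```

Tracking the spread over time, rather than the average alone, shows whether quality is converging across the team or whether a few labelers account for most of the variation.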

Each of these metrics is reported at both the project level and the user level, giving you a single source of truth for your project's annotation and labeler analytics.

Learn more about the project performance dashboard in our documentation.

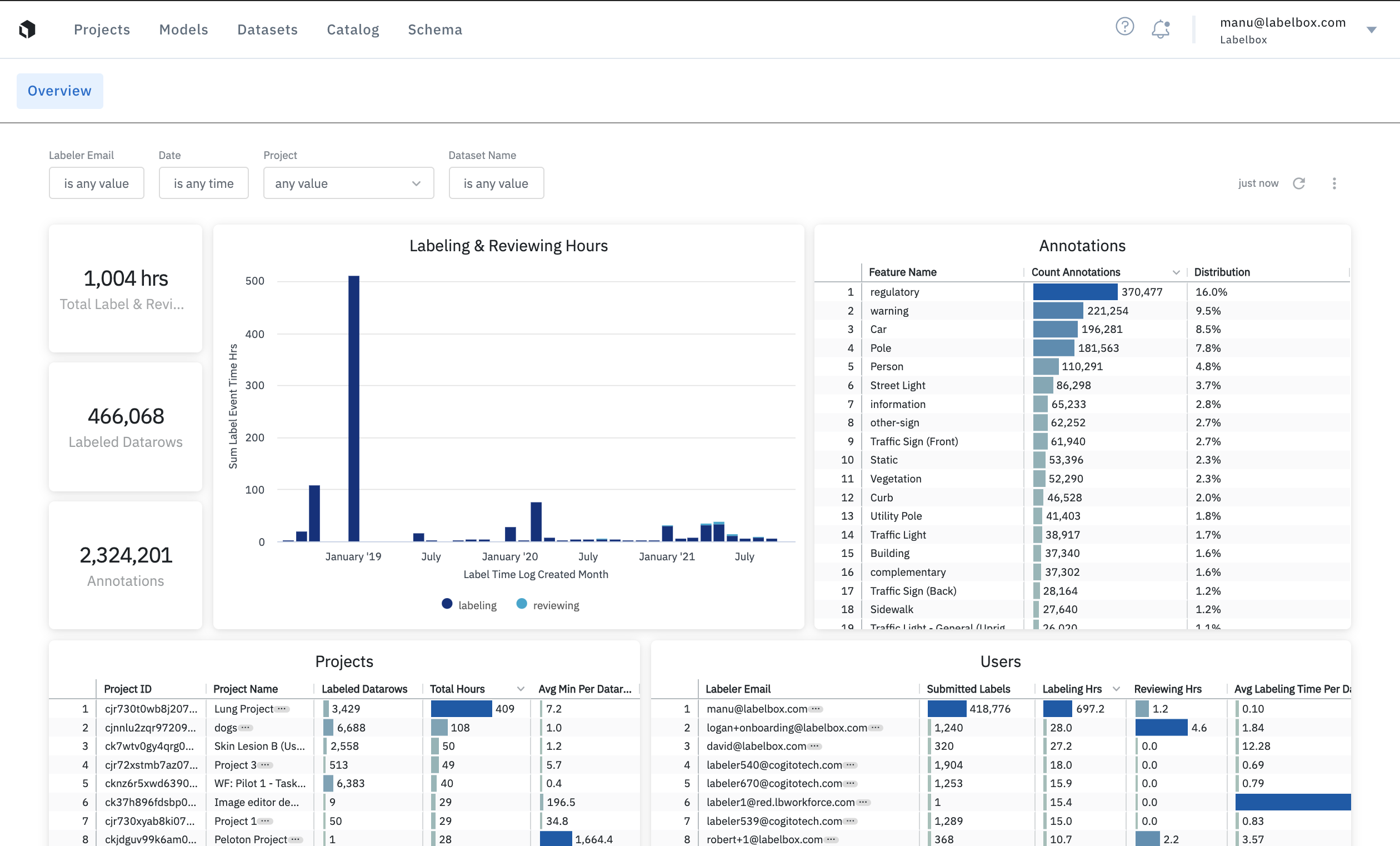

Advanced analytics for Enterprise teams

For larger Enterprise teams, Labelbox provides advanced analytics to better track labeling time and spend.

- Get a global view of your labeling operations across all Labelbox projects.

- Filter by fields such as labeler email, submission date, project, or dataset to better manage cost and quality.

- Interact with and customize your data for deeper insight, and schedule detailed reports.
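The filter-then-aggregate idea behind these reports can be sketched in a few lines. The example below does not reproduce the Labelbox Enterprise analytics interface. The `WorkRecord` fields are hypothetical, and the cost model (total hours multiplied by a flat hourly rate) is an assumption made for illustration.

```python
from dataclasses import dataclass
from datetime import date


# Hypothetical work record; "seconds" is total labeling + review + rework time.
@dataclass
class WorkRecord:
    labeler_email: str
    project: str
    dataset: str
    submitted: date
    seconds: float


def filter_records(records, *, labeler_email=None, project=None, dataset=None,
                   start=None, end=None):
    """Keep records that match every filter that is set; None means 'any'."""
    def keep(r):
        return ((labeler_email is None or r.labeler_email == labeler_email)
                and (project is None or r.project == project)
                and (dataset is None or r.dataset == dataset)
                and (start is None or r.submitted >= start)
                and (end is None or r.submitted <= end))
    return [r for r in records if keep(r)]


def estimated_spend(records, hourly_rate):
    """Assumed cost model: total hours multiplied by a flat hourly rate."""
    return sum(r.seconds for r in records) / 3600 * hourly_rate


records = [
    WorkRecord("ana@example.com", "roads", "ds1", date(2024, 6, 1), 3600.0),
    WorkRecord("ben@example.com", "roads", "ds2", date(2024, 6, 5), 1800.0),
    WorkRecord("ana@example.com", "signs", "ds1", date(2024, 6, 7), 7200.0),
]
roads = filter_records(records, project="roads")
print(estimated_spend(roads, hourly_rate=20.0))
```

Keyword-only filters that default to `None` let one function serve every combination of labeler, project, dataset, and date range. This mirrors the way a report narrows the same underlying data in different ways.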

Learn more about advanced analytics for Enterprise teams in our documentation.
