How to curate and version your training datasets and hyperparameters

Teams need to manage their model inputs and outputs in a single place. Being able to understand and visualize how different models compare to each other is a crucial aspect of a successful data engine.

Try the Colab: Google Colab Notebook

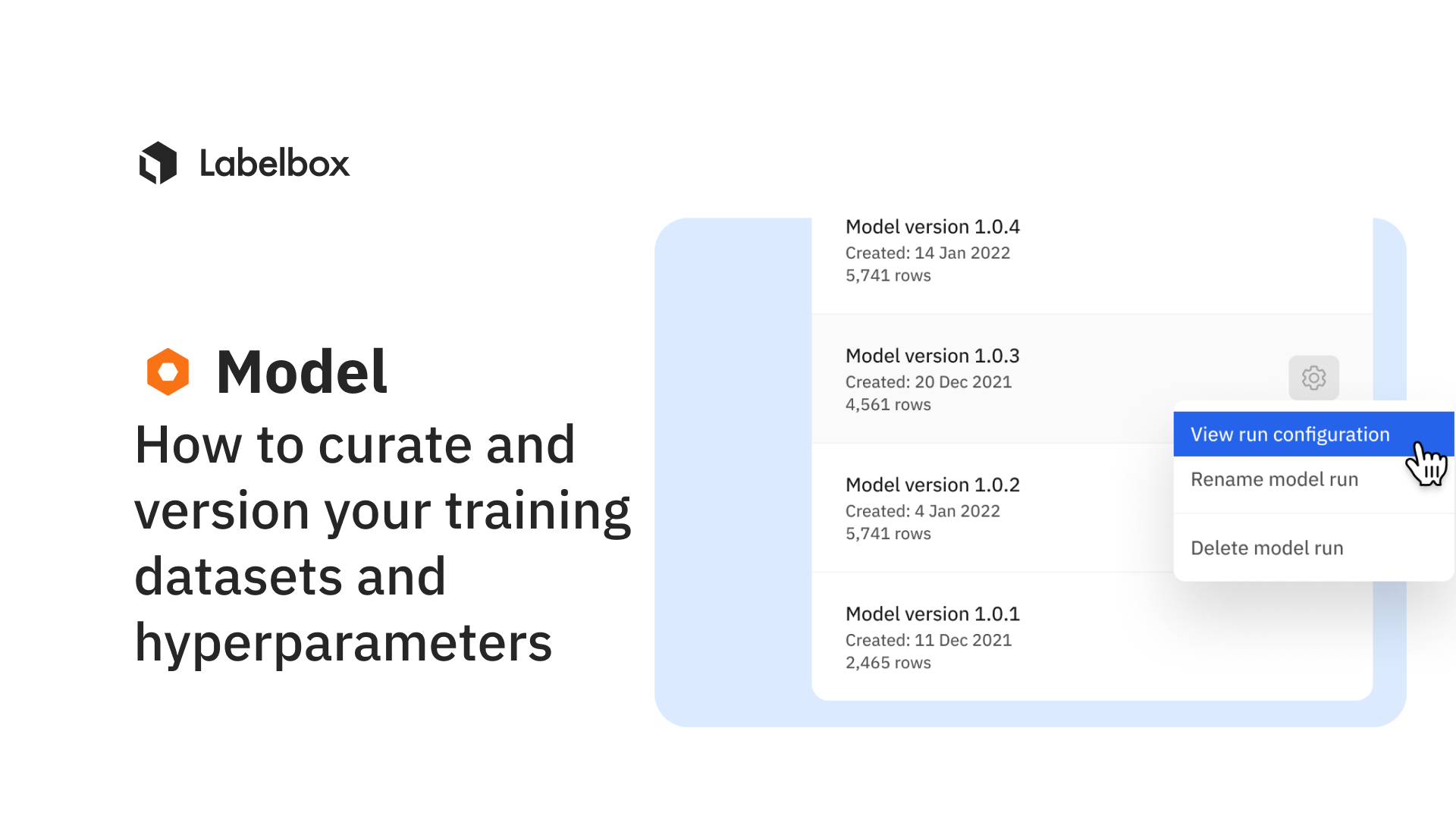

Rather than flying blind, teams can use Model to configure, track, and compare model training hyperparameters alongside the training data and data splits used for each run. Tracking experiments this way makes them reproducible, so you can see what changed between runs and share best practices with your team.

ML teams may want to kick off multiple model runs with different sets of hyperparameters and compare performance. Having a single place to track model configurations and performance is crucial to model iteration and for reproducing model results. Learn how you can:

- Create a model

- Create a model run

- Curate data splits

- Create and modify a model run configuration through our UI

- Create and modify a model run configuration through the SDK

- Use our Google Colab Notebook (as shown in the video) to curate and version your training datasets and hyperparameters
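To make the idea of versioning data splits and hyperparameters together more concrete, here is a minimal, library-agnostic sketch in plain Python. It is not the SDK used in the Colab notebook: the function names (`assign_split`, `config_version`, `make_run_record`), the split names, and the 80/10/10 ratios are all assumptions chosen for illustration. It assigns each data row to a split deterministically by hashing its ID, and fingerprints the hyperparameter configuration so that two runs can be compared by version.

```python
import hashlib
import json


def assign_split(data_row_id: str, train: float = 0.8, valid: float = 0.1) -> str:
    """Deterministically map a data row ID to a split (hypothetical split names)."""
    digest = hashlib.sha256(data_row_id.encode("utf-8")).hexdigest()
    # Use the first 32 bits of the hash as a stable value in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    if bucket < train:
        return "TRAINING"
    if bucket < train + valid:
        return "VALIDATION"
    return "TEST"


def config_version(config: dict) -> str:
    """Return a short fingerprint that changes whenever any hyperparameter changes."""
    # Sorting keys makes the fingerprint independent of dict insertion order.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def make_run_record(name: str, data_row_ids: list, config: dict) -> dict:
    """Bundle a run's name, hyperparameters, and data splits into one record."""
    splits = {"TRAINING": [], "VALIDATION": [], "TEST": []}
    for row_id in sorted(data_row_ids):
        splits[assign_split(row_id)].append(row_id)
    return {
        "name": name,
        "config": dict(config),
        "config_version": config_version(config),
        "splits": splits,
    }


if __name__ == "__main__":
    ids = [f"row-{i}" for i in range(1000)]
    run_a = make_run_record("run-a", ids, {"learning_rate": 0.01, "batch_size": 32})
    run_b = make_run_record("run-b", ids, {"learning_rate": 0.001, "batch_size": 32})
    # Same data, same splits, but different configuration versions.
    print(run_a["config_version"] != run_b["config_version"])
    print(run_a["splits"] == run_b["splits"])
```

Because the split depends only on the data row ID, re-running the script reproduces the same training, validation, and test sets, and any change to a hyperparameter produces a new configuration version.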

To learn more about model run configurations, feel free to visit our documentation.
