How to enhance RAG chatbot performance by refining a reranking model

When building customized chatbots and similar LLM applications, teams may start off with RAG (Retrieval Augmented Generation) approaches. A RAG-centric application relies on context to formulate an appropriate response when given a user query. Because the context is retrieved from internal documents, RAG based approaches are adept at handling organization specific chatbots while minimizing hallucinations. A good example of how organizations are leveraging RAG centric applications is Metamate, an employee agent used at Meta which pulls internal information at request for answering employee questions, producing meeting summaries, writing code and debugging features.

While easy to prototype, optimizing RAG-based applications for relevant retrieval require complex considerations due to the potential semantic information that is lost when embedding internal documents within vector databases. Therefore, a useful RAG based chatbot requires careful strategies for preprocessing document metadata enrichment (e.g,. metadata filtering, domain specific embedding models, chunking, etc) as well as model inferencing LLM, prompt engineering, and re-ranking retrieved documents.

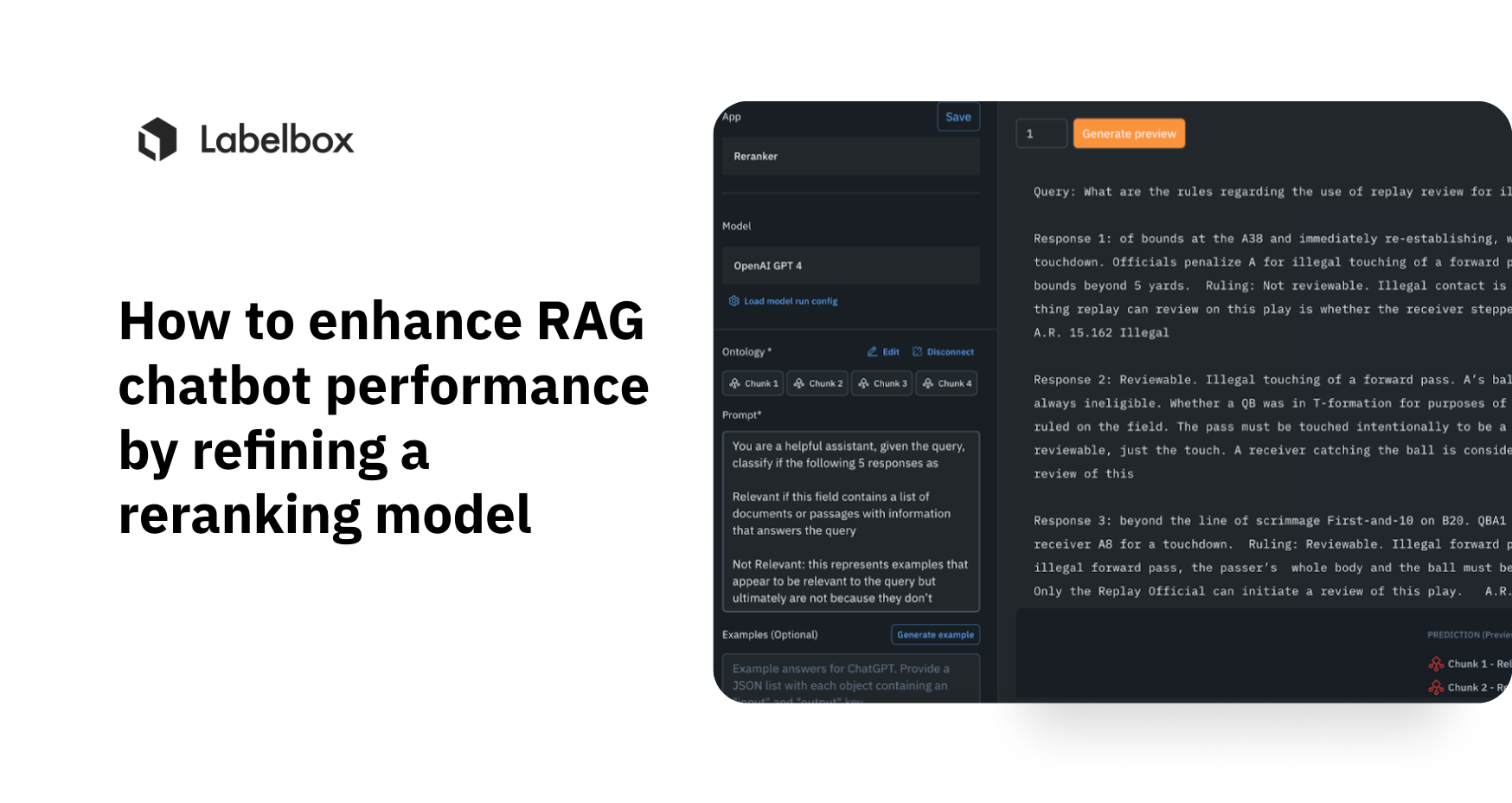

In this guide, we’ll cover how Labelbox can be a useful tool to help you preprocess documents (e.g., entity detection, document classification, etc) and improve retrieval relevance by fine-tuning a reranking model. Feel free to walk through the full guide or watch the step-by-step video below.

Why reranking?

When building RAG-based applications, thereʼs a limit to the amount of text that can be passed to an LLM and therefore the context given to an LLM is limited to the top k documents pulled from the vector space using a similarity search. Because semantic information may be lost during the embedding process, relevant context that may be useful to answering the user query may fall outside of the top k responses.

With reranking embedded within a RAG application, we now have the ability to consider a larger corpus of retrieved text and pass the most relevant documents into our LLM to generate a better answer.

Using the Labelbox Platform, we will fine tune an reranker model to improve chatbot performance. Holding other considerations consistent (i.e., token limit, embedding model, chunking strategy, LLM choice), we will compare baseline responses without reranking to the output of a fine-tuned reranker workflow.

Part 1. Review initial baseline responses

To illustrate this approach, we will attempt to improve responses to the 2022 NFL Rulebook 🏈.

Note: From the document, the document is divided into different sections. You can leverage the Labelbox Platform to label metadata for each page (i.e. Contents / Scenarios / Rules) to improve efficient and relevant retrieval.

The PDF is embedded using the HuggingFace sentence- transformers/distiluse-base-multilingual-cased-v2 model. We will keep the same vector embeddings for both workflows.

After extracting the top 2 documents (via similarity search), we will add them as context to the flan-t5-base LLM model to generate initial responses to queries about NFL rules.

As you can see from the sample of results below, the initial responses are spotty at best.

Part 2. Human in the Loop (HITL) and Semi-Supervised Approaches to Fine Tune Reranker Model

To fine tune our reranker model, let's extract 5 responses for each query and upload the prompt / responses(s) pairs to Labelbox Catalog.

Note: We can leverage unsupervised techniques such as semantic search, similarity search, cluster search, etc to enrich data rows with relevant metadata and/or bulk classification. The highlighted cluster shown below contains search queries related to flagrant penalties, which we can use to attach metadata & classifications.

Using Model Foundry, we can apply semi-supervised learning with foundation models to generate pre labels. Let’s select GPT4 and attach a customized prompt to generate responses to match the target ontology, in this case, whether the output is relevant or not relevant to the query.

By sending the query response pairs with pre-labels to our annotation project, human labelers can now leverage pre-labels (and/or metadata & attachments) to speed up the annotation process. Predictions are then converted to ground truth once the human-in-the loop process is completed.

To track model performance, we can set dataset versions and enable data splits before exporting the data rows via the Labelbox Python SDK.

Part 3. Fine Tuning the Reranker Model

For our workflow, we will fine tune the BAAI/bge-reranker-large open source model on HuggingFace 😊. Companies such as Cohere also provides reranking models as well for fine tuning.

To fine tune our model, we’ll need to format the ground truth labels into JSON line format.

Note: that the model was fine-tuned with AWS Sagemaker ml.g4dn.xlarge machine with T4 GPUs. The Google Colab environment also provides free GPUs for model fine tuning.

The model returns relevancy scores for each chunk. We can see that the relative order of the scores and not the absolute value is what matters. Applying this on a data row in the testing set yields the following:

We can see from the histogram distribution of relevancy scores for the testing set that relevant examples tend to have higher scores.

Part 4. Inference with an updated RAG workflow

Using the same document embeddings (sentence-transformers/distiluse- base-multilingual-cased-v2) and LLM (flan-t5-base LLM model), let's retrieve the top 20 document chunks for each query.

Using the fine tuned reranker model, let's rerank the top 3 documents which will be sent to the LLM as context.

Applying inferencing on the testing set, we can now compare the baseline response with the responses with reranking model. From here, we will see some markedly improved responses.

Conclusion

As we’ve shown in this guide, model performance for customized chatbots rely on both the quality and quantity of labeled data. By incorporating Labelbox Catalog and Foundry to apply pre-labels with unsupervised & semi-supervised techniques, we were able to maximize labeling throughput. Afterwards, by using Labelbox Annotate, we were able to leverage a human-in-the-loop workflow to ensure that our training labels are of the highest quality. Teams should consider adopting this approach when building RAG-based applications to better preprocess documents and improve retrieval relevance by fine-tuning a reranking model.

Labelbox is a data-centric AI platform that empowers teams to iteratively build powerful chatbots that fuel knowledge sharing and foster deeper customer interactions. To get started, sign up for a free Labelbox account or request a demo.

All guides

All guides