How to evaluate and optimize your data labeling project's results

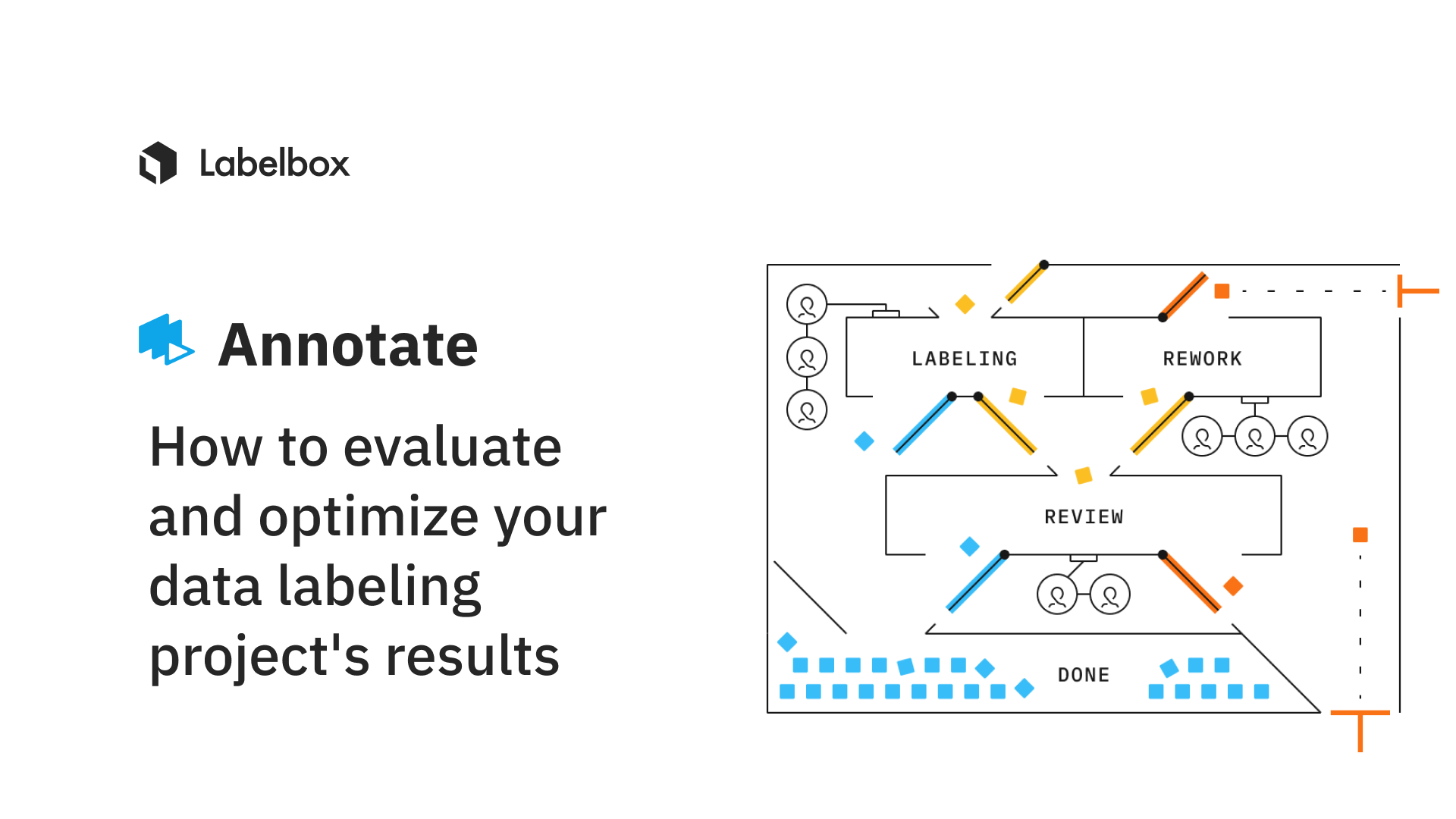

Leading machine learning teams establish high-quality labeling workflows by starting with small iterative batches to develop an effective feedback loop with labeling teams early in the process. This approach allows ML teams to quickly identify issues and make necessary changes to their labeling instructions and ontologies, enabling labeling teams to become more proficient with the task before large volumes of data are labeled. Following this iterative approach, teams are able to generate higher quality labeled data quickly and at scale.

After a task has been completed and an ML team has entered into the production phase, it's important to evaluate and consider several factors that can guide you toward greater optimization of future batches.

What should ML teams consider once a labeling project is complete?

Feedback from labelers

After project completion, you should consider sourcing feedback from your labelers. Machine learning teams often benefit from asking labelers to share their thoughts on the labeling task to better understand what was challenging, discover edge cases, and receive suggestions on how to improve efficiency.

A great way to crowdsource this feedback is through Labelbox's issues & comments feature that can be used on individual data rows. This allows the labeling team and your ML team to collaborate and provide feedback on specific examples or assets. Updates within a project can also be used to provide more holistic feedback on the project – enabling three-way communication between you, the Labelbox Boost admin, and the labeling workforce. Lastly, you can also rely on shared Google Docs and team syncs to collect feedback from the labeling team.

Labeling instructions and ontology changes

After a project has been completed, you can review project results and labeler performance to pinpoint areas for improving your labeling instructions or ontology.

Questions that you can consider during review include:

- Do the results of the project show any areas of labeling uncertainty that can be improved by editing the task's instructions?

Tip: Creating category definitions that are mutually exclusive can improve labeling team alignment and reduce the chance of confusion between object types.

Tip: Adding numbered steps can be a great way to organize complex labeling tasks.

Tip: Including decision trees and rule of thumb guidance can be helpful for subjective tasks that require critical thinking.

- Do project results reveal opportunities for improving ontology structure?

Tip: Consider whether the ontology is organized in the most efficient manner. For instance, can labeling time be improved by using nested classifications instead of a list of single objects?

Tip: Review whether the ontology includes too many objects or too few objects to achieve project goals.

Tip: If warranted, share the "why" behind your ontology requirements or provide additional project context to help labelers better contextualize tasks.

Improve your data selection

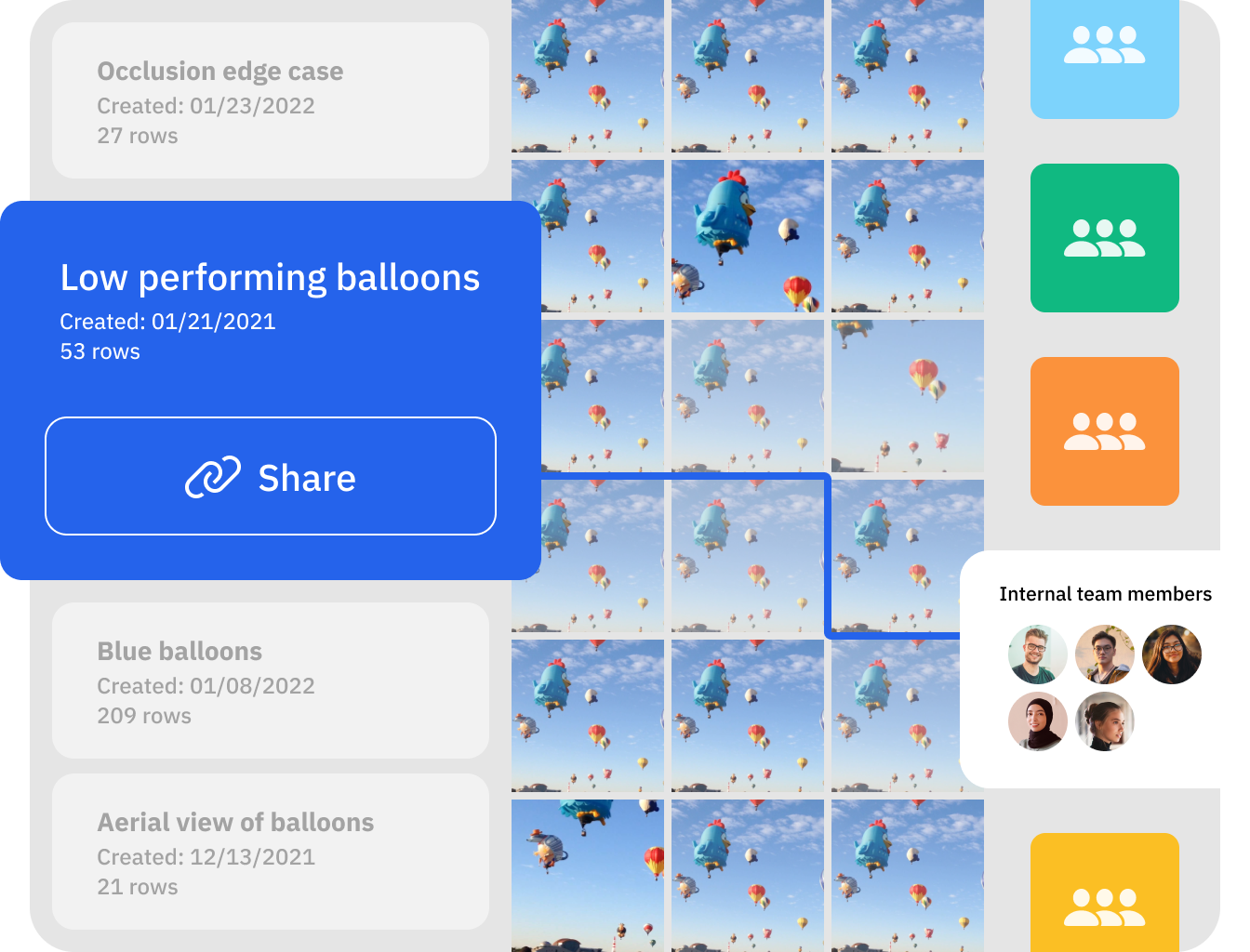

After the successful completion of a labeling project, it can be tempting to proceed by creating projects or batches of even larger datasets to label. However, to make sure that you're training your model on the most impactful examples, you can leverage Labelbox's Catalog and Model to curate and prioritize future batches.

With Catalog and Model, you can quickly identify label and model errors, find all instances of data similar to edge cases or mislabeled data rows, and send them to Annotate in order to retrain your model on specific slices of data. Prioritizing high-value data for labeling is key to maximizing labeling efficiency and reducing project costs.
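One common way to decide which data is "high value" is to rank unlabeled rows by how uncertain the current model is about them. The sketch below is a generic illustration of that idea and not Labelbox's own method or API: the `rank_by_uncertainty` function, the row IDs, and the probabilities are all hypothetical, and it assumes you have already exported per-class confidence scores for each data row.

```python
from typing import Dict, List


def rank_by_uncertainty(predictions: Dict[str, List[float]], top_k: int) -> List[str]:
    """Return the IDs of the top_k rows whose most likely class has the lowest confidence.

    `predictions` maps a (hypothetical) data row ID to the model's class probabilities.
    """
    def top_confidence(row_id: str) -> float:
        # Confidence of the model's best guess for this row.
        return max(predictions[row_id])

    # Least confident rows first: these are assumed to be the most useful to label.
    ranked = sorted(predictions, key=top_confidence)
    return ranked[:top_k]


# Hypothetical model outputs, for illustration only.
predictions = {
    "row-a": [0.98, 0.01, 0.01],
    "row-b": [0.40, 0.35, 0.25],
    "row-c": [0.70, 0.20, 0.10],
    "row-d": [0.55, 0.44, 0.01],
}

next_batch = rank_by_uncertainty(predictions, top_k=2)
print(next_batch)  # ['row-b', 'row-d']
```

Least-confidence ranking is only one heuristic. In practice you may want to combine it with similarity search around known edge cases so that the next batch is not dominated by a single failure mode.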

Refer to the below guides and resources to learn more about how to improve data selection with Labelbox:

- How to search, surface, and prioritize data within a project

- How to prioritize high-value data

- How to find and fix label errors

- How to find and fix model errors

- How to find similar data in one click

Review your quality strategy

One key area to review after the completion of a project is how well your quality assurance strategy performed. ML teams will benefit from asking the following:

- If consensus was used on the project, were there enough votes to provide sufficient insights?

- Was the number of benchmarks used, or the percentage of consensus coverage applied, sufficient to assess performance across the dataset?

- Were the benchmark or consensus agreement results as expected, or lower than anticipated?

Tip: If consensus scores are consistently high or do not provide additional valuable insights, consider lowering the number of votes, reducing the coverage percentage, or removing consensus altogether to reduce labeling time.

- If using SLAs, how well were expectations and requirements met?
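The consensus questions above are easier to answer with a quick summary of agreement scores. The following is a minimal sketch, assuming you have exported one agreement score between 0 and 1 per data row. The `summarize_consensus` function, the 0.7 threshold, and the sample scores are hypothetical and chosen only for illustration.

```python
from statistics import mean
from typing import Dict


def summarize_consensus(scores: Dict[str, float], low_threshold: float = 0.7) -> dict:
    """Summarize per-row consensus agreement scores (each assumed to be in [0, 1])."""
    if not scores:
        raise ValueError("scores must contain at least one data row")

    # Rows where labelers disagreed enough to deserve a closer look.
    low_rows = sorted(row_id for row_id, s in scores.items() if s < low_threshold)

    return {
        "mean_agreement": mean(scores.values()),
        "low_agreement_rows": low_rows,
        "low_agreement_share": len(low_rows) / len(scores),
    }


# Hypothetical exported scores, for illustration only.
scores = {"row-1": 0.95, "row-2": 0.60, "row-3": 0.90, "row-4": 0.85}

summary = summarize_consensus(scores)
print(summary["low_agreement_rows"])   # ['row-2']
print(summary["low_agreement_share"])  # 0.25
```

A consistently high mean with very few low-agreement rows suggests that consensus could be scaled back, while a large share of low-agreement rows points to instructions or ontology definitions that need another pass.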

Your team should also assess how well the labeling review process worked. If you notice areas for improvement or a need to improve review efficiency, you can customize your review step with workflows.

To learn more about workflows, read our guide, How to customize your annotation review process.

Labeling team size and skillset

Evaluating the size and makeup of the labeling team is important at every phase of your labeling operations. At the end of a project, it's recommended that you review whether the labeling team size was too small to meet the desired production capability or too large for the required volume. Additionally, it is key for teams to consider whether future batches of data will require labelers with specialized training (such as having industry-specific or language experience).
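A back-of-the-envelope calculation can help check whether a team is sized appropriately for the next batch. The sketch below is a rough estimate under stated assumptions and not a staffing formula from Labelbox: the `labelers_needed` function, the default of 6 productive hours per day, and the example numbers are all hypothetical, and the average time per asset would come from your completed project.

```python
import math


def labelers_needed(
    num_assets: int,
    seconds_per_asset: float,
    deadline_days: int,
    productive_hours_per_day: float = 6.0,  # assumed productive time per labeler per day
) -> int:
    """Estimate how many labelers are required to finish a batch by the deadline."""
    if deadline_days <= 0 or productive_hours_per_day <= 0:
        raise ValueError("deadline_days and productive_hours_per_day must be positive")

    # Total labeling effort for the batch, in hours.
    total_hours = num_assets * seconds_per_asset / 3600

    # Hours a single labeler can contribute before the deadline.
    capacity_per_labeler = deadline_days * productive_hours_per_day

    # Round up: a fractional labeler still means one more person.
    return math.ceil(total_hours / capacity_per_labeler)


# Example: 50,000 assets at 45 seconds each, due in 10 working days.
print(labelers_needed(50_000, 45, 10))  # 11
```

This estimate ignores review time, rework, and ramp-up for new labelers, so treat the result as a lower bound when discussing capacity with the labeling team.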

Keeping communication open with the labeling team is also crucial during these considerations. This is especially important when working with external labeling teams, who often benefit from having advance notice of any new batches or changing requirements so that they can effectively allocate or maintain their resources.

Communicate future project needs clearly and in good time, including the anticipated readiness of the next project or batch, the expected size of the dataset, changes to the instructions or labeling requirements, and ideal completion dates, to help ensure a smooth ramp-up on the next project.

You can easily access data labeling services with specialized expertise suited to your specific use case through Labelbox Boost. Collaborate with the workforce in real time to monitor data quality, all while keeping human labeling costs to a minimum using AI-assisted tools and automation techniques.

Contact our Boost team to get paired with a specialized workforce team or learn more about how one of our customers, NASA's Jet Propulsion Laboratory, used Labelbox Boost to deliver quality labels at 5x their previous speed.
