How to find and fix model errors

A great way to boost model performance is to surface the edge cases on which the model is struggling. You can then fix those model failures with targeted improvements to your training data, so that the model improves on these edge cases.

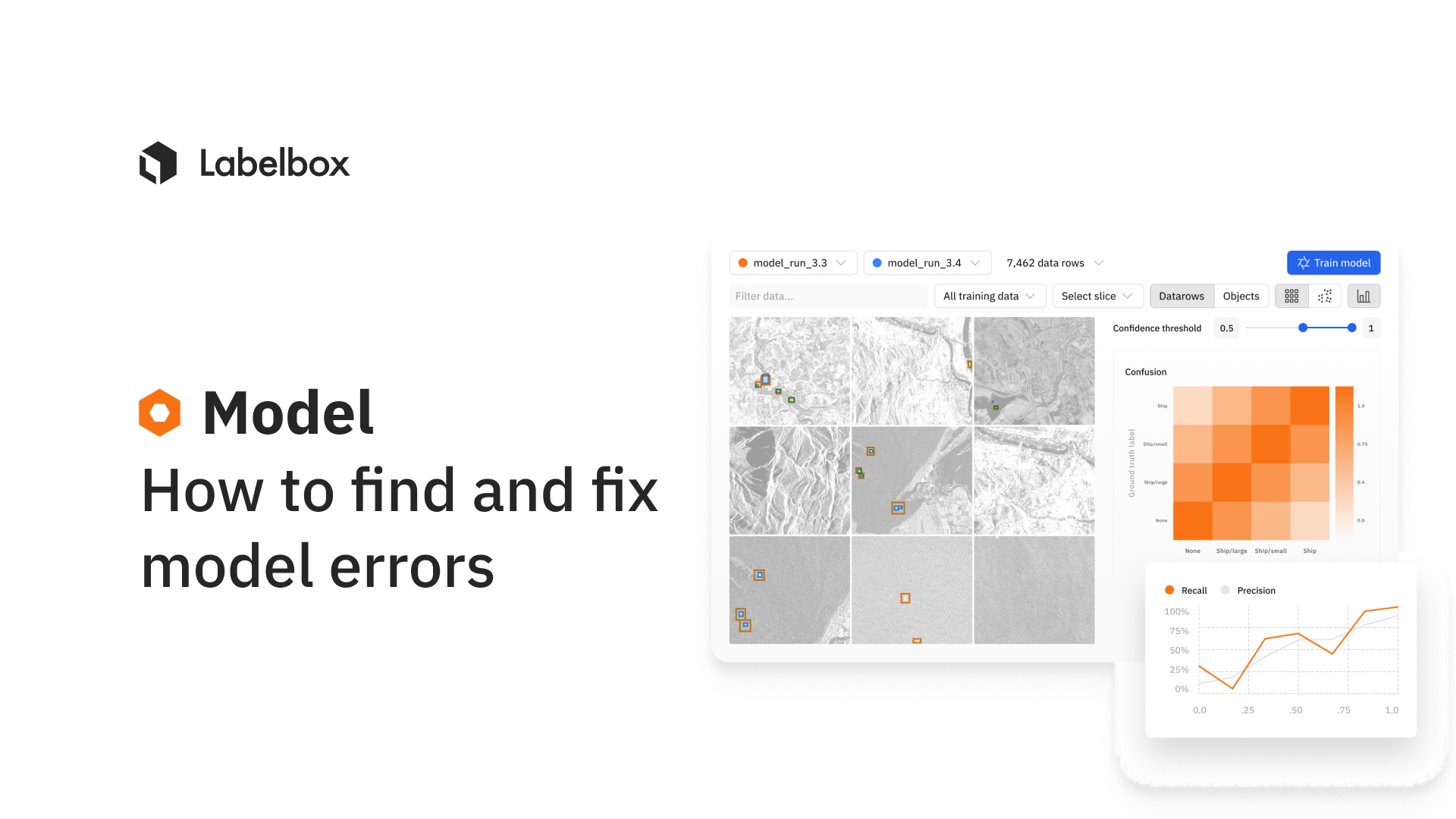

Once you upload your model predictions and model metrics to Labelbox, you can use your trained model as a guide to find model failures and edge cases. With quantitative and visual inspection, you can identify challenging edge cases and then use Labelbox Catalog to find similar unlabeled data to improve your model.

Here's a systematic process that can help teams easily surface and fix model errors:

Step 1: Look for assets where your model predictions and labels disagree – one way to do that is to look at model metrics and surface clusters of data points where the model is struggling.

Step 2: Visualize these challenge cases and identify patterns of model failures. Prioritize the most important model failures to fix.

Step 3: Now that you've identified the edge cases that need fixing, find the unlabeled data points that are most similar to those challenge cases. These are high-impact data points that you want to prioritize for labeling.

Step 4: Re-train the model on this newly labeled data. The model should learn to make better predictions on data that resembles the newly added data points and struggle less than it did before. In a few steps, you've fixed your model errors and boosted model performance.
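Steps 1 and 3 can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not Labelbox's implementation or SDK: it assumes each labeled asset has one bounding box label and one predicted box, uses IoU as the model metric, and assumes you already have an embedding vector for every asset. The function names, the 0.5 threshold, and the toy data are all hypothetical.

```python
from math import sqrt


def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def find_disagreements(labeled, threshold=0.5):
    """Step 1: IDs of assets whose prediction-vs-label IoU is below the
    (hypothetical) threshold, worst first."""
    scored = sorted((iou(a["label"], a["prediction"]), a["id"]) for a in labeled)
    return [asset_id for score, asset_id in scored if score < threshold]


def cosine_similarity(u, v):
    dot = sum(x * y for x, y in zip(u, v))
    norm = sqrt(sum(x * x for x in u)) * sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def most_similar_unlabeled(challenge_embeddings, unlabeled, top_k=2):
    """Step 3: rank unlabeled assets by their best cosine similarity
    to any challenge case and return the top_k IDs."""
    if not challenge_embeddings:
        return []
    scored = []
    for asset in unlabeled:
        best = max(cosine_similarity(asset["embedding"], c)
                   for c in challenge_embeddings)
        scored.append((best, asset["id"]))
    scored.sort(reverse=True)
    return [asset_id for _, asset_id in scored[:top_k]]


# Toy data (hypothetical): boxes are (x_min, y_min, x_max, y_max).
labeled = [
    {"id": "img-1", "label": (0, 0, 10, 10), "prediction": (0, 0, 10, 10),
     "embedding": (1.0, 0.0)},
    {"id": "img-2", "label": (0, 0, 10, 10), "prediction": (8, 8, 18, 18),
     "embedding": (0.0, 1.0)},
    {"id": "img-3", "label": (0, 0, 10, 10), "prediction": (1, 1, 11, 11),
     "embedding": (0.9, 0.1)},
]
unlabeled = [
    {"id": "raw-1", "embedding": (0.1, 0.9)},
    {"id": "raw-2", "embedding": (1.0, 0.0)},
    {"id": "raw-3", "embedding": (0.0, 1.0)},
]

challenge_ids = find_disagreements(labeled)
challenge_embeddings = [a["embedding"] for a in labeled if a["id"] in challenge_ids]
to_label = most_similar_unlabeled(challenge_embeddings, unlabeled)

print(challenge_ids)
print(to_label)
```

On this toy data, only `img-2` falls below the threshold, and the unlabeled assets closest to it are `raw-3` and `raw-1`, which would be the first candidates to send for labeling. Step 2 (visual inspection and prioritization) is deliberately left out, since it relies on human judgment rather than code.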

How else can you benefit from a data engine like Labelbox?

Teams can also drive data-centric iterations by finding and fixing label errors to improve model performance. Learn more about how to find and fix label errors in this guide and in our documentation.
