
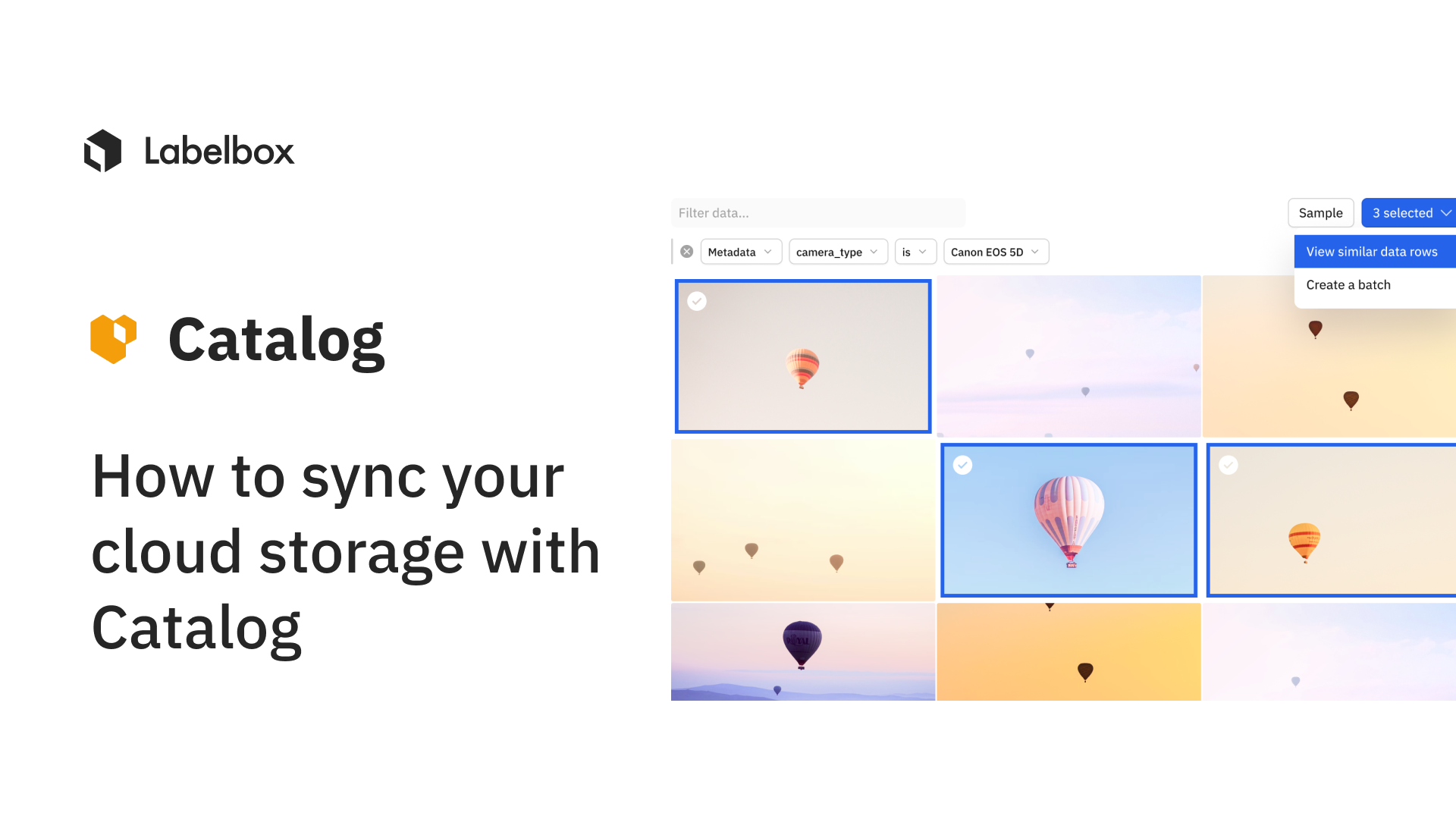

How to sync your cloud storage with Catalog

For many ML teams, a data pipeline that keeps their cloud storage bucket and Labelbox Catalog in sync, including Data Rows, attachments, and metadata, is critical. In this guide, we'll show you how to set up Google Cloud Functions that keep your data in sync. A similar approach can be applied to Amazon S3 and Microsoft Azure.

You can sync your cloud storage with Catalog by using two cloud functions: upload_asset.py, triggered when a new object is uploaded to the bucket (the google.storage.object.finalize event), and delete_asset.py, triggered when an object is deleted from the bucket (the google.storage.object.delete event).
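
Both functions receive the Cloud Storage object's metadata as the event dict, and the functions below read only its bucket and name fields. The following minimal sketch isolates that parsing step so it can be checked without a Labelbox account. GcsObjectRef, parse_gcs_event, and the sample bucket and object names are hypothetical, used only for illustration.

```python
from typing import NamedTuple


class GcsObjectRef(NamedTuple):
    """Where a Cloud Storage object lives, derived from a storage event payload."""
    bucket: str
    name: str

    @property
    def url(self) -> str:
        # Same gs:// form that upload_asset passes as row_data
        return f"gs://{self.bucket}/{self.name}"


def parse_gcs_event(event: dict) -> GcsObjectRef:
    """Extract the bucket and object name from a storage-triggered event.

    Hypothetical helper: it reads the same "bucket" and "name" keys as the
    cloud functions. Raises KeyError if either key is missing.
    """
    return GcsObjectRef(bucket=event["bucket"], name=event["name"])


# Example payload, trimmed to the two fields the functions use
sample_event = {"bucket": "my-training-images", "name": "cats/cat_001.jpg"}
ref = parse_gcs_event(sample_event)
print(ref.url)   # gs://my-training-images/cats/cat_001.jpg
print(ref.name)  # cats/cat_001.jpg (used as the global key)
```

Keeping the parsing in one place means both functions derive the URL and global key from the payload in exactly the same way.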

# upload_asset.py
from labelbox import Client, Dataset

# Add your API key below
LABELBOX_API_KEY = ""

client = Client(api_key=LABELBOX_API_KEY)


def upload_asset(event, context):
    """Uploads an asset to Catalog when a new asset is uploaded to a GCP bucket.

    If a dataset named after the bucket exists in Catalog, the asset is added
    to that dataset. Otherwise, a new dataset is created.

    Args:
        event (dict): Event payload.
        context (google.cloud.functions.Context): Metadata for the event.
    """
    bucket_name = event["bucket"]
    object_name = event["name"]

    # Reuse the dataset named after the bucket, or create it on first upload
    datasets = client.get_datasets(where=Dataset.name == bucket_name)
    dataset = next(datasets, None)
    if not dataset:
        # "DEFAULT" selects the organization's default IAM integration
        dataset = client.create_dataset(name=bucket_name, iam_integration="DEFAULT")

    # Register the object by its gs:// URL; the object name doubles as the global key
    url = f"gs://{bucket_name}/{object_name}"
    dataset.create_data_row(row_data=url, global_key=object_name)
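
upload_asset follows a get-or-create pattern: the first object uploaded from a bucket creates a dataset named after that bucket, and later objects are added to it. Because the object name alone becomes the global key, and Labelbox treats global keys as unique across an organization, the same object path in two different buckets would collide. The minimal sketch below illustrates both behaviours offline with an in-memory stand-in; InMemoryCatalog, simulate_upload, and the bucket names are hypothetical and not part of the Labelbox SDK.

```python
from typing import Dict, List, Set


class InMemoryCatalog:
    """Hypothetical stand-in for Catalog, used only to illustrate upload_asset's flow.

    It models two behaviours the function relies on: datasets are found by
    name (and created on first use), and each global key maps to one data row.
    """

    def __init__(self) -> None:
        self.datasets: Dict[str, List[str]] = {}  # dataset name -> row_data URLs
        self.global_keys: Set[str] = set()

    def get_or_create_dataset(self, name: str) -> List[str]:
        # Plays the role of get_datasets(...) followed by create_dataset(...) if nothing matched
        return self.datasets.setdefault(name, [])

    def create_data_row(self, dataset: List[str], row_data: str, global_key: str) -> None:
        # Reject a key that is already assigned, as an organization-wide unique key would
        if global_key in self.global_keys:
            raise ValueError(f"global key already in use: {global_key}")
        self.global_keys.add(global_key)
        dataset.append(row_data)


def simulate_upload(catalog: InMemoryCatalog, event: dict) -> None:
    """Same steps as upload_asset, run against the in-memory stand-in."""
    bucket, name = event["bucket"], event["name"]
    dataset = catalog.get_or_create_dataset(bucket)
    catalog.create_data_row(dataset, f"gs://{bucket}/{name}", global_key=name)


catalog = InMemoryCatalog()
simulate_upload(catalog, {"bucket": "bucket-a", "name": "img/001.jpg"})
simulate_upload(catalog, {"bucket": "bucket-a", "name": "img/002.jpg"})
print(catalog.datasets)
# {'bucket-a': ['gs://bucket-a/img/001.jpg', 'gs://bucket-a/img/002.jpg']}

# The same object name in a second bucket reuses the global key and is rejected
try:
    simulate_upload(catalog, {"bucket": "bucket-b", "name": "img/001.jpg"})
except ValueError as err:
    print(err)  # global key already in use: img/001.jpg
```

If you sync several buckets, one option (an assumption, not part of this guide's functions) is to include the bucket in the key, for example f"{bucket_name}/{object_name}", and derive the key the same way in delete_asset.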

# delete_asset.py
from labelbox import Client

# Add your API key below
LABELBOX_API_KEY = ""

client = Client(api_key=LABELBOX_API_KEY)


def delete_asset(event, context):
    """Deletes the asset from Catalog, using its global key, when the asset is deleted from a GCP bucket.

    Args:
        event (dict): Event payload.
        context (google.cloud.functions.Context): Metadata for the event.
    """
    # The object name was used as the global key when the asset was uploaded
    global_key = event["name"]

    # The lookup takes a list of global keys and returns the matching data row IDs
    res = client.get_data_row_ids_for_global_keys([global_key])

    if res["status"] == "SUCCESS" and res["results"]:
        # get_data_row is the public accessor for fetching a data row by ID
        data_row = client.get_data_row(res["results"][0])
        data_row.delete()
    else:
        print("Global key does not exist")
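
delete_asset acts only when the lookup's status is SUCCESS, and then reads the first entry of results. That decision can be pulled into a small pure function that is easy to unit-test without network access. first_data_row_id is a hypothetical helper, and the sample responses below are illustrative dicts shaped like what delete_asset reads, not captured API output.

```python
from typing import Optional


def first_data_row_id(res: dict) -> Optional[str]:
    """Return the data row ID for a single-key lookup, or None if there is none.

    Hypothetical helper. It assumes the response shape that delete_asset reads:
    a dict with a "status" string and a "results" list of data row IDs.
    """
    if res.get("status") != "SUCCESS":
        return None
    results = res.get("results") or []
    # Treat an empty list or a falsy ID as "not found"
    return results[0] if results and results[0] else None


# Illustrative responses only; these are not captured from the Labelbox API
found = {"status": "SUCCESS", "results": ["ckxyz123"], "errors": []}
missing = {"status": "FAILURE", "results": [], "errors": [{"global_key": "cats/cat_001.jpg"}]}
print(first_data_row_id(found))    # ckxyz123
print(first_data_row_id(missing))  # None
```

With this helper, the body of delete_asset reduces to calling client.get_data_row(...).delete() only when the returned ID is not None.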

You can also learn more about how Catalog can help you curate your unstructured data with precision in our documentation.
