Better gait and posture classification using sensors in individuals with mobility impairment after a stroke

Summary: Researchers from the University of Zurich recently developed and validated a sensor-based method for monitoring the gait and posture of individuals with mobility impairment after a stroke.

Challenge: Stroke leads to motor impairment that reduces physical activity, which in turn limits social participation and increases the risk of secondary cardiovascular events. Continuous monitoring of physical activity with motion sensors is promising because it allows tailored treatments to be prescribed in a timely manner. To extract actionable outcome measures from unstructured motion data, gait activities and body postures must first be classified accurately.

Findings: For distinguishing real-life gait from non-gait (gait classification), the method achieved accuracies between 85% and 93% across sensor configurations using support vector machine (SVM) and logistic regression (LR) models.

On the much more challenging task of discriminating among lying, sitting, standing, walking, and stair ascent/descent (gait and posture classification), the method achieved accuracies between 80% and 86% with at least one ankle sensor and one wrist sensor attached on the same side of the body.

The researchers hope this work will prove a useful resource for other researchers and clinicians in digital health, an increasingly important field, particularly for remote movement monitoring with motion sensors.

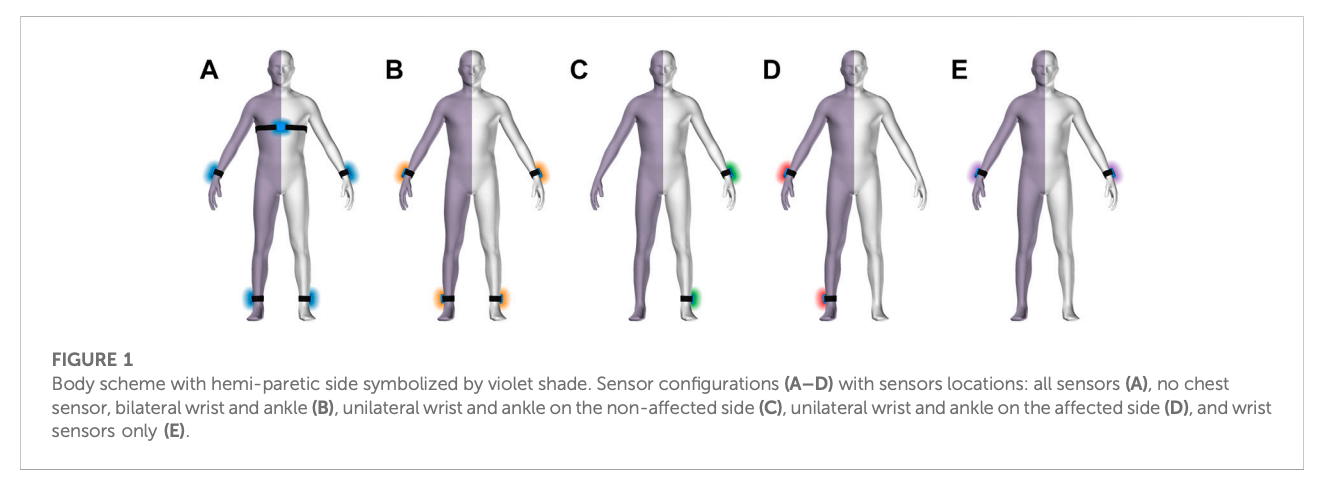

How Labelbox was used: Video and motion sensor data (sensors on the wrists, ankles, and chest) were collected from fourteen stroke survivors with motor impairment while they performed real-life activities in their home environments. All video data was then labeled in the Labelbox platform with five classes of gait and body posture and three classes of transitions, which served as ground truth. The researchers then trained support vector machine (SVM), logistic regression (LR), and k-nearest neighbor (kNN) models either to identify gait bouts only or to classify both gait and posture. Model performance was assessed and compared across five different sensor placement configurations.
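The post does not describe how gait-only predictions were turned into gait bouts. A minimal sketch, assuming per-sample gait/non-gait predictions at a 50 Hz rate: consecutive gait samples are grouped into bouts, and a hypothetical `min_duration_s` threshold discards very short runs. `gait_bouts` and `summarize` are illustrative names, not the authors' code.

```python
from itertools import groupby


def gait_bouts(is_gait, rate_hz=50, min_duration_s=0.0):
    """Group consecutive gait samples into (start_s, end_s) bouts.

    is_gait: per-sample booleans (True = gait), e.g. classifier output.
    rate_hz: sampling rate of the predictions (assumed 50 Hz here).
    min_duration_s: hypothetical threshold; shorter gait runs are dropped.
    """
    bouts = []
    start = 0  # index of the first sample in the current run
    for gait, run in groupby(is_gait):
        length = sum(1 for _ in run)
        if gait and length / rate_hz >= min_duration_s:
            bouts.append((start / rate_hz, (start + length) / rate_hz))
        start += length
    return bouts


def summarize(bouts):
    """Simple outcome measures: number of bouts and total gait time (s)."""
    return {"n_bouts": len(bouts), "gait_time_s": sum(e - s for s, e in bouts)}


# Example: 1 s non-gait, 2 s gait, 0.5 s non-gait, 0.2 s gait (at 50 Hz).
pred = [False] * 50 + [True] * 100 + [False] * 25 + [True] * 10
bouts = gait_bouts(pred, rate_hz=50, min_duration_s=0.5)
print(bouts)             # [(1.0, 3.0)]
print(summarize(bouts))  # {'n_bouts': 1, 'gait_time_s': 2.0}
```

Grouping runs with `itertools.groupby` keeps the sketch short; a real pipeline might also merge bouts separated by brief pauses, which this sketch does not attempt.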

Video was recorded at 30 frames per second (fps), whereas the inertial measurement unit (IMU) time series were sampled at 50 Hz. A single experimenter labeled the videos frame by frame, and the labels were then resampled to match the sampling rate of the synchronized IMUs. For quality assessment, a random sample of 33.3% of the data was labeled by a second experimenter.
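The post specifies neither the resampling method nor the agreement statistic. A minimal sketch, assuming both streams start at the same instant after synchronization: each 50 Hz IMU sample takes the label of the 30 fps video frame that covers it (a sample-and-hold lookup, which keeps labels categorical), and Cohen's kappa is shown as one common way to quantify agreement between two labelers. All function names are hypothetical.

```python
from collections import Counter

VIDEO_FPS = 30  # video frame rate from the post
IMU_HZ = 50     # IMU sampling rate from the post


def resample_labels(frame_labels, src_rate=VIDEO_FPS, dst_rate=IMU_HZ):
    """Map per-frame video labels onto the IMU sample grid.

    Sample i (time i / dst_rate) takes the label of the video frame that
    covers that instant. Integer arithmetic avoids floating-point drift.
    Assumes both streams start at t = 0 after synchronization.
    """
    n_out = len(frame_labels) * dst_rate // src_rate
    return [frame_labels[i * src_rate // dst_rate] for i in range(n_out)]


def percent_agreement(a, b):
    """Fraction of samples on which two labelers assigned the same class."""
    if len(a) != len(b) or not a:
        raise ValueError("label sequences must be non-empty and equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Chance-corrected agreement between two labelers (Cohen's kappa)."""
    p_o = percent_agreement(a, b)
    n = len(a)
    count_a, count_b = Counter(a), Counter(b)
    # Agreement expected if both labelers assigned classes independently
    p_e = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both labelers used one identical class throughout
    return (p_o - p_e) / (1 - p_e)


print(resample_labels(["sitting", "sitting", "standing"]))
# ['sitting', 'sitting', 'sitting', 'sitting', 'standing']
rater_1 = ["sitting", "sitting", "standing", "walking"]
rater_2 = ["sitting", "standing", "standing", "walking"]
print(percent_agreement(rater_1, rater_2))       # 0.75
print(round(cohens_kappa(rater_1, rater_2), 3))  # 0.636
```

Three video frames (0.1 s) become five IMU samples, so every 50 Hz sample still carries exactly one label from the original class set.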

Labeling criteria defined the start and end conditions for three body postures (lying down, sitting, standing) and two gait types (walking and stair ascent/descent). The team also annotated three transition labels between corresponding posture or gait types (lying down/sit, sit/stand, stand/walk) without specifying direction (e.g., sit-to-stand vs. stand-to-sit). This labeling framework produced a discrete label for every frame of the recording.
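Because every frame carries exactly one of eight discrete labels, both classification targets can be derived from the same annotations. A minimal sketch with hypothetical label strings; the post does not say how transition frames were handled in either task, so counting them as non-gait and dropping them from the five-class task are assumptions.

```python
# Hypothetical label strings for the eight classes in the labeling framework
POSTURES = ("lying", "sitting", "standing")
GAIT = ("walking", "stairs")  # stairs: ascent or descent
TRANSITIONS = ("lying/sitting", "sitting/standing", "standing/walking")  # undirected
ALL_LABELS = set(POSTURES + GAIT + TRANSITIONS)


def validate(labels):
    """Reject any frame whose label is not one of the eight classes."""
    unknown = {lab for lab in labels if lab not in ALL_LABELS}
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")


def gait_targets(labels):
    """Gait vs non-gait targets. Assumption: transitions count as non-gait."""
    validate(labels)
    return [lab in GAIT for lab in labels]


def gait_posture_targets(labels):
    """Five-class targets. Assumption: transition frames are dropped (None)."""
    validate(labels)
    return [None if lab in TRANSITIONS else lab for lab in labels]


frames = ["sitting", "sitting/standing", "standing",
          "standing/walking", "walking", "stairs"]
print(gait_targets(frames))
# [False, False, False, False, True, True]
print(gait_posture_targets(frames))
# ['sitting', None, 'standing', None, 'walking', 'stairs']
```

Keeping one label table and deriving both targets from it ensures the gait-only and gait-and-posture tasks share identical ground truth.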

You can read the full PDF here.
