How to customize your annotation review process

Higher-quality data results in higher-quality models, but obtaining high-quality data can be challenging. ML engineers and labeling operations managers face a recurring problem: how to complete large, complex training data projects in a systematic way.

There are so many variables and decision trees that it can be hard to envision the most efficient way to structure, label, and QA your projects. Teams have traditionally had to rely on weekly meetings or other manual methods, so we built Workflows, the ultimate orchestration tool for your training data cycles.

What is Workflows?

Workflows is a new feature that gives teams the flexibility to tailor how labeled data gets reviewed across multiple tasks and reviewers, enabling faster iteration cycles.

Teams can set up dynamic workflows based on attributes, such as annotation type or labeler, to reduce costs and increase labeling and review throughput, quality, and efficiency.

Why are workflows important?

Workflows works in conjunction with Batches and the Data Rows tab to enable a highly customizable, step-by-step review pipeline.

At a glance, Workflows allows you to easily understand the state of your data within your labeling operations pipeline. Rather than relying on manual spreadsheets or ad-hoc methods, you have a systematic way of understanding how many data rows are ready for model training, how many are currently in review, how many are being re-labeled, and how many haven’t been labeled yet.

With Workflows, teams have more granular control over how data rows get reviewed and can customize their review pipeline to bring efficiency and automation into the review process.

Designed to help save your team labeling time and cost, Workflows enables you to:

- Easily understand the status of your data within your labeling operations pipeline

- Have multiple groups, like your core labeling team and subject matter experts, review labeled data in a specific manner by configuring custom review steps

- Select labels for rework individually or in bulk with minimal manual work

- Create ad-hoc review steps to ensure quality

- View a Data Row's history with the audit log for greater context

Customize and set up your project’s review task

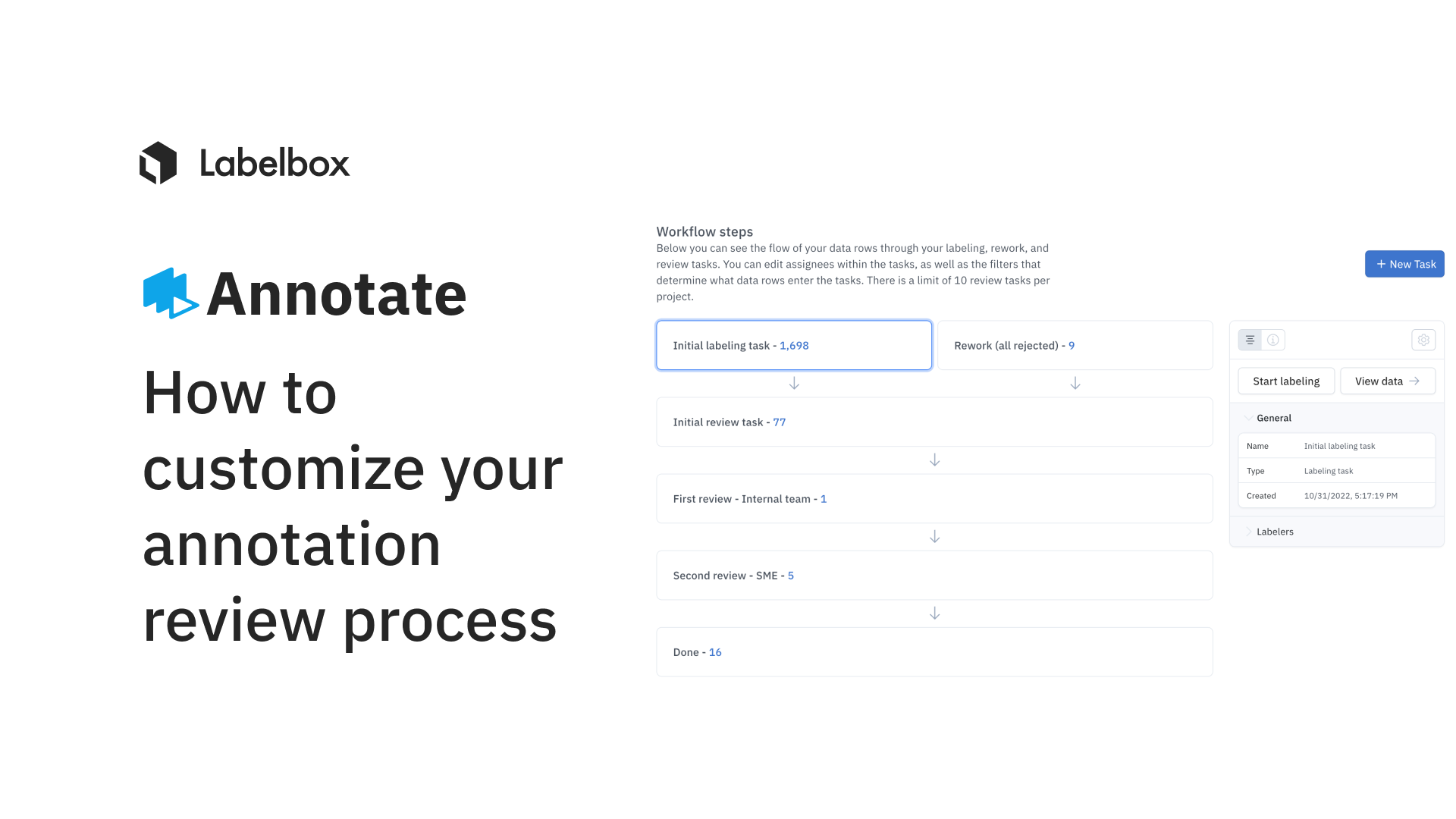

By default, there are four tasks that appear when you create a new project:

- Initial labeling task: reserved for all data rows that have been queued for labeling

- Initial review task: reserved for all data rows that are currently in the first review step

- Rework task: reserved for data rows that have been Rejected

- Done task: reserved for data rows that have a) moved through their qualified tasks in the workflow or b) did not qualify for any of the tasks

You can have up to 10 review tasks within a workflow.
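As a rough mental model, the default tasks and the review-task limit can be sketched in plain Python. This is not the Labelbox SDK: the `Workflow` class, its method names, and the "Expert review" task are hypothetical, and the order of the returned list is for illustration only.

```python
from dataclasses import dataclass, field

MAX_REVIEW_TASKS = 10  # limit stated in this guide


@dataclass
class Workflow:
    """Hypothetical in-memory model of a project's workflow (not the Labelbox SDK)."""

    # A new project starts with a single review task.
    review_tasks: list[str] = field(default_factory=lambda: ["Initial review task"])

    def add_review_task(self, name: str) -> None:
        """Append a custom review task, enforcing the 10-task limit."""
        if len(self.review_tasks) >= MAX_REVIEW_TASKS:
            raise ValueError(f"a workflow can have at most {MAX_REVIEW_TASKS} review tasks")
        self.review_tasks.append(name)

    def stages(self) -> list[str]:
        """All tasks in the workflow: labeling, review tasks, Rework, and Done."""
        return ["Initial labeling task", *self.review_tasks, "Rework task", "Done task"]


workflow = Workflow()
workflow.add_review_task("Expert review")  # hypothetical custom review step
print(workflow.stages())
```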

How it works with a project’s Overview page

The new Overview page gives your team a holistic view of where your data rows are in the labeling pipeline.

At a glance, you’re able to understand which data rows can be used downstream. For a deeper dive, you can click on a stage to open the Data Rows tab filtered to the data rows in that stage.
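The per-stage summary on the Overview page amounts to a count of data rows grouped by workflow stage. A minimal sketch of that idea, assuming you have a list of (data row ID, stage) pairs from some export; the IDs and the shape of the data here are made up for illustration:

```python
from collections import Counter

# Hypothetical export: (data row ID, current workflow stage)
data_rows = [
    ("dr-1", "Done"),
    ("dr-2", "Initial review task"),
    ("dr-3", "Rework"),
    ("dr-4", "Done"),
    ("dr-5", "To label"),
]


def count_by_stage(rows):
    """Return how many data rows are currently in each stage."""
    return Counter(stage for _, stage in rows)


counts = count_by_stage(data_rows)
for stage, n in counts.items():
    print(f"{stage}: {n}")
```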

Move Batches between stages of your workflow

A batch is a collection of data rows that are queued from Catalog and added to your labeling project. Batches are critical in enabling faster data-centric iterations and in helping unlock active learning workflows to reduce label or model errors.

In the Data Rows tab, you can filter by batch or annotation to send subsets of your data to 'Rework' in bulk. You can also send data rows in bulk to other stages of your workflow.
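Conceptually, a bulk move is a filter followed by a stage change. The sketch below illustrates this with plain dictionaries; `move_rows`, the field names, and the batch names are hypothetical and do not correspond to any Labelbox API.

```python
from typing import Callable


def move_rows(rows: list[dict], matches: Callable[[dict], bool], target_stage: str) -> list[str]:
    """Move every row that passes the filter to target_stage; return the moved IDs."""
    moved = []
    for row in rows:
        if matches(row):
            row["stage"] = target_stage
            moved.append(row["id"])
    return moved


rows = [
    {"id": "dr-1", "batch": "batch-a", "stage": "Initial review task"},
    {"id": "dr-2", "batch": "batch-b", "stage": "Initial review task"},
    {"id": "dr-3", "batch": "batch-a", "stage": "Done"},
]

# Send everything in the (made-up) batch "batch-a" to Rework.
moved = move_rows(rows, lambda r: r["batch"] == "batch-a", "Rework")
print(moved)
```

Passing the filter as a function keeps the sketch flexible: the same helper works for a filter on annotation type or labeler.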

Better collaborate and create ad-hoc review tasks based on project needs

Based on project progress or ad-hoc needs, you can create a new review task. This allows you to modify your workflow based on feedback from a Labeling Operations Manager or based on your team’s labeling performance.

Another reason to create an ad-hoc review task is subject-matter expert review. Teams can add a review step and set parameters that control what gets reviewed at this stage, making the best use of the reviewer’s time and keeping costs down.

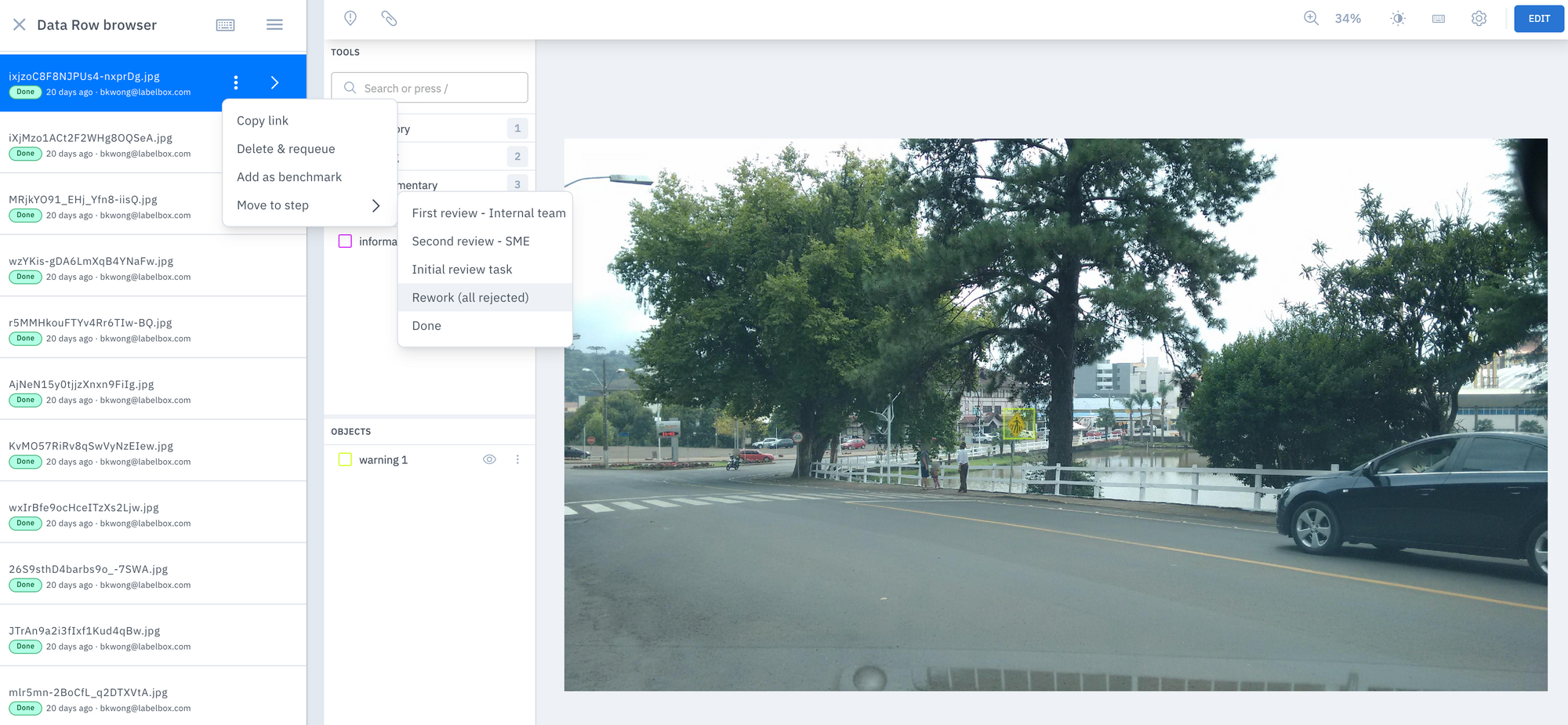

Teams can also send a data row to a specific review task from the Data Row browser for ad-hoc review. For example, if you find some poorly labeled data rows that you want to send to Rework, you can do so directly from the Data Row browser.

Easily review your data & automatically send incorrect labels to be fixed

When reviewing your data rows, you can approve or reject each one. How you do so depends on whether it belongs to a Benchmark-based project or a Consensus-level batch.

Approving a data row: Data rows that have been approved will be automatically moved to the next review task in your queue. Data rows that are in the "Done" step give you a real-time, high-level view of how much of your data is ready to incorporate into your model.

Rejecting a data row: Data rows that have been rejected will be automatically sent to “Rework” for correction. You can mark the issue and leave a comment as to why the label was rejected – allowing your labelers to understand and correct any labeling errors.
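The approve and reject rules above behave like a small state machine. Here is a minimal sketch, assuming an ordered list of review tasks; the function name and the "Expert review" task are hypothetical, and the sketch ignores any per-task filters that decide whether a data row qualifies for a task.

```python
def next_stage(current: str, decision: str, review_tasks: list[str]) -> str:
    """Return the stage a data row moves to after a review decision."""
    if decision == "reject":
        # Rejected rows always go back for correction.
        return "Rework"
    if decision == "approve":
        position = review_tasks.index(current)
        # Approved rows advance to the next review task, or to Done after the last one.
        if position + 1 < len(review_tasks):
            return review_tasks[position + 1]
        return "Done"
    raise ValueError(f"unknown decision: {decision!r}")


review_tasks = ["Initial review task", "Expert review"]  # second task is made up
print(next_stage("Initial review task", "approve", review_tasks))
print(next_stage("Expert review", "approve", review_tasks))
print(next_stage("Expert review", "reject", review_tasks))
```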

How to approve / reject data rows for a Benchmark-based project

How to approve / reject data rows for a Consensus-based project

Better understand your project’s data lineage to iterate & provide feedback

With large, complex projects, it can be hard to keep track of a Data Row’s lifecycle.

A multi-step review workflow means that a Data Row can travel between different stages of the workflow. You can quickly do spot checks with the audit log to better understand how a Data Row has ended up in a particular stage of your workflow. This helps ensure that no Data Row has skipped an important step of the review process and can give you a clear picture of how a Data Row got from “To label” to “Done”.

This ability to track the journey of a Data Row through a review cycle can help teams make more informed decisions on how to make edits to their review workflow for future iterations.
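A spot check of this kind can be pictured as comparing the stages a Data Row has visited against the steps you require. The sketch below assumes the audit log can be represented as a list of events with a `to_stage` field; that structure, the function name, and the stage names are assumptions for illustration, not the actual audit log format.

```python
def missing_steps(history: list[dict], required_steps: list[str]) -> list[str]:
    """Return the required steps that never appear in a data row's history."""
    visited = {event["to_stage"] for event in history}
    return [step for step in required_steps if step not in visited]


# Hypothetical history for one data row, oldest event first.
history = [
    {"to_stage": "To label"},
    {"to_stage": "Initial review task"},
    {"to_stage": "Done"},
]

required = ["Initial review task", "Expert review"]
print(missing_steps(history, required))
```

An empty result means the Data Row passed through every required step before reaching its current stage.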

Workflows FAQ

What replaces thumbs up / thumbs down voting?

For all new projects, thumbs up / thumbs down voting will be replaced by the approve / reject workflow. In review tasks, the following actions can be taken:

Benchmark

Approve: Reviewer will approve a data row

- This will move the data row on to qualify for the next task in the Workflow

- In case there is only a single review task (such as the "Initial review task" that Labelbox automatically creates), the data row will end up in "Done".

Reject: Reviewer will reject a data row

- This will move the data row to the Rework task

Consensus

Approve: Reviewer will choose a winner label to approve the data row

- This will move the data row on to qualify for the next task in the Workflow

- In case there is only a single review task (such as the "Initial review task" that Labelbox automatically creates), the data row will end up in "Done".

Reject: Reviewer will reject a data row, essentially rejecting all labels on that data row

- This will move the data row to the "Rework" task

- In "Rework", any labeler or reviewer can modify all labels associated with the data row

You can also use the 'Move to step' functionality for ad-hoc review.

How do you keep track of the journey of a Data Row?

Workflows allows you to see the journey of your Data Rows as they move from 'To label' to 'Done' through your customized review steps.

With the audit log, admins can view the entire set of events that happened on a Data Row after it was labeled, allowing you to understand the complete journey of each Data Row. This can be especially helpful when teams need to investigate the review history of a particular Data Row or a set of Data Rows.

How do you know when a data row is ready to be used for model training?

Data Rows that appear in the 'Done' stage of your workflow can be used for model training. The 'Done' stage signifies that the Data Row has passed through all necessary review steps, giving you a real-time, high-level view of how much of your data is ready to be used for model training.

How do you ensure that you’re only reviewing the most important assets?

This will likely depend on your particular workflow, but teams can use filters in the Data Rows tab to surface specific data rows of interest for review.

Multi-step review workflows give teams the flexibility to review Data Rows at a specific step of the review process. Rather than having to review or sort through all of your data rows, you can use the Data Rows tab to get a holistic look at all the review steps within a project’s workflow.

You can read more about this in the Data Rows tab guide.

Can I use the Rework task instead of Delete-and-requeue?

'Delete and requeue' will still be supported for a little while longer. Eventually, the 'Rework' step of your Workflow will replace 'Delete and requeue'. Labelbox is currently working on parity between the asynchronous functionality in Rework tasks and 'Delete and requeue'.
