How to use AI to improve website search relevance

With the latest advances in foundation models, organizations can now enhance website search relevance by better matching user intent with product listings. While companies now have access to a wealth of search queries, sifting through all of them can be incredibly time-consuming and resource-intensive. By leveraging AI, teams can analyze search queries and feedback at scale to gain insights into common topics and customer sentiment. This allows businesses to identify common themes and pinpoint areas of improvement, enhancing their overall website experience and maximizing key metrics such as user retention, conversion, and revenue.

However, businesses can face multiple challenges when implementing AI for search relevance. These include:

- Data quality and quantity: Improving search relevance requires a vast amount of data in the form of search queries and accurate product descriptions. Orchestrating data from various sources can not only be challenging to maintain, but even more difficult to sort, analyze, and enrich with quality insights.

- Dynamic review landscape: The changing nature and format of customer review data from multiple sources (e.g., webpages, apps, social media) requires businesses to account for continuous data updates and re-training needs.

- Cost & scalability: Developing accurate custom AI can be expensive in data, tools, and expertise. Leveraging foundation models, with human-in-the-loop verification, can help accelerate model development by automating the labeling process.

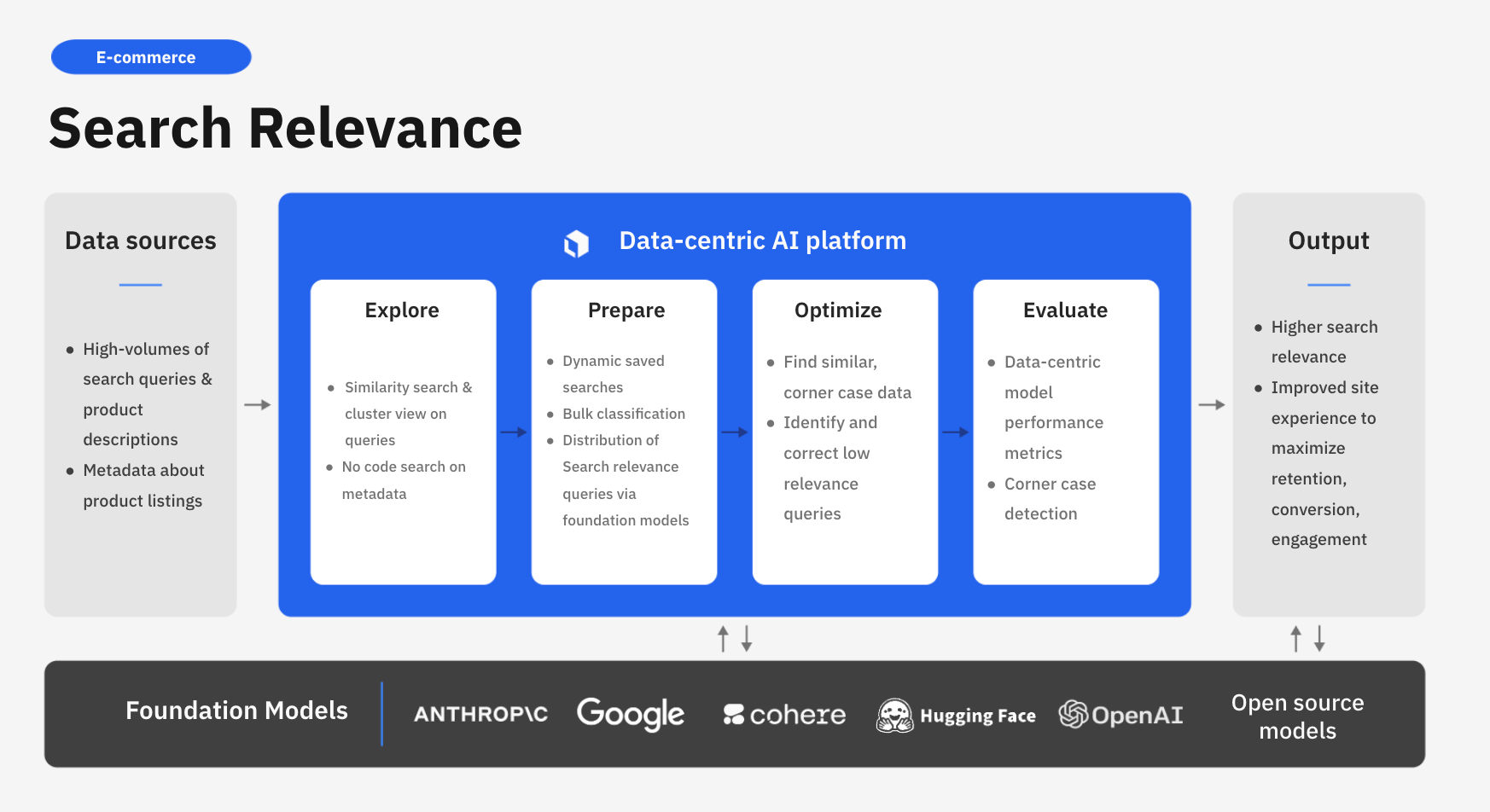

Labelbox is a data-centric AI platform that empowers businesses to transform their website search relevance for product descriptions and listings. Instead of relying on time-consuming manual reviews, companies can leverage Labelbox’s assisted data enrichment and flexible training frameworks to quickly build AI systems that uncover actionable insights from customer searches.

In this guide, we’ll walk through how your team can leverage Labelbox’s platform to dramatically improve search relevance for any website or app. Specifically, this guide will walk through how you can explore and better understand search query topics and classify product descriptions/listings to make more data-driven business decisions around the customer experience.

See it in action: How to use AI to improve search relevance for your website

The walkthrough below covers Labelbox’s platform across Catalog, Annotate, and Model. We recommend that you create a free Labelbox account to best follow along with this tutorial.

Part 1: Explore and enhance your data

Part 2: Create a model run and evaluate model performance


Part 1: Explore and prepare your data

Follow along with the tutorial and walkthrough in the Google Colab Notebook. If you are following along, please make a copy of the notebook.

Ingest data into Labelbox

As customer queries and product descriptions across channels proliferate, brands want to learn from customer feedback to build the most user-friendly experience on their website or app. For this use case, we’ll be working with a dataset of e-commerce website queries – with the goal of analyzing the queries to demonstrate how a company could gain insight into how their customers search for products and how to optimize for relevance.

The first step will be to gather data:

For the purpose of this tutorial, we’ve provided a sample open-source Kaggle dataset that can be downloaded.

Please download the dataset and store it in an appropriate location in your environment. You'll also need to update the read/write file paths throughout the notebook to reflect the relevant locations in your environment, along with all references to API keys and to Labelbox ontology, project, and model run IDs.

- If you wish to follow along and work with your own data, you can import your text data as a CSV.

- If your text snippets sit as individual files in cloud storage, you can reference the URL of these files through our IAM delegated access integration.
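
If you are importing your own CSV, the preparation step can be sketched roughly as follows. This is a minimal sketch, not the notebook's code: the column names `query` and `query_class` and the `query-<index>` key scheme are assumptions for illustration, and the `row_data` / `global_key` field names are meant to mirror Labelbox's data row import format, which you should verify against the current SDK documentation before uploading.

```python
import csv
import io


def build_data_rows(csv_text, text_column="query"):
    """Turn each CSV record into a dict holding the text and a unique key.

    The column name and key scheme are hypothetical; adapt them to your file.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = []
    for index, record in enumerate(reader):
        text = (record.get(text_column) or "").strip()
        if not text:
            continue  # skip records with an empty query
        rows.append({"row_data": text, "global_key": f"query-{index}"})
    return rows


# Tiny in-memory sample standing in for a downloaded CSV file.
sample_csv = (
    "query,query_class\n"
    "kitchen blender stainless steel,Appliances\n"
    ",Unknown\n"
    "wall mirror,Decor\n"
)
data_rows = build_data_rows(sample_csv)
print(data_rows)
```

The upload itself is left out on purpose. With the Labelbox Python SDK, a list like this would typically be passed to a dataset's data row creation method, but check the SDK reference for the exact call and payload shape.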

Once you’ve uploaded your dataset, you should see your text data rendered in Labelbox Catalog. You can browse through the dataset and visualize your data in a no-code interface to quickly pinpoint and curate data for model training.

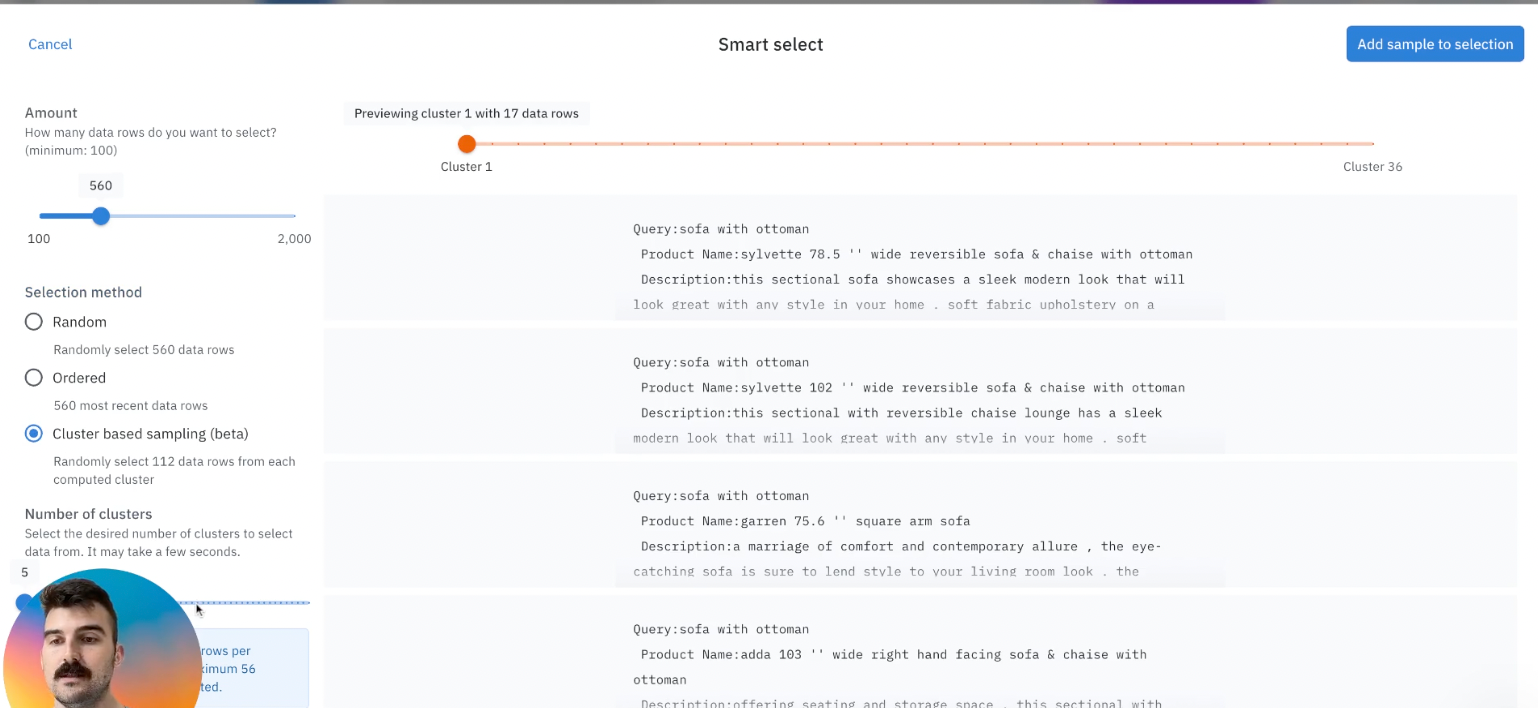

Search and curate data by clustering

With your dataset in Labelbox Catalog, you can enrich each asset with custom metadata and attachments for greater context.

Explore topics of interest

With your data in Labelbox, you can begin to leverage Catalog to uncover interesting topics to get a sense of what customers are searching for.

- Visualize your data – you can click through individual data rows to get a sense for which queries are the most popular.

- Drill into specific topics of interest – leverage natural language search, for example searching for “beds”, “mirrors”, etc., to bring up all queries related to that topic. You can adjust the confidence threshold of your searches, which can be helpful in gauging the volume of data related to the topic of interest.

- Easily find all examples that are similar to data of interest.
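
To build intuition for how a confidence threshold changes the volume of matching queries, here is a toy sketch. It is not how Catalog works internally: it scores queries by simple word-overlap cosine similarity, whereas a production search would typically rely on learned embeddings. The sample queries and the `search` helper are hypothetical.

```python
import math
from collections import Counter


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def search(queries, topic, threshold):
    """Return (query, score) pairs whose similarity to the topic meets the threshold."""
    topic_vec = Counter(topic.lower().split())
    scored = [(q, cosine(Counter(q.lower().split()), topic_vec)) for q in queries]
    return [(q, score) for q, score in scored if score >= threshold]


queries = [
    "wall mirror",
    "bathroom mirror with lights",
    "queen bed frame",
    "kitchen blender",
]

strict = search(queries, "mirror", threshold=0.6)
loose = search(queries, "mirror", threshold=0.4)
print(len(strict), len(loose))
```

Lowering the threshold returns more queries at the cost of looser matches, which is the same trade-off you tune when gauging how much data relates to a topic.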

Part 2: Create an initial model run of search relevance assessments

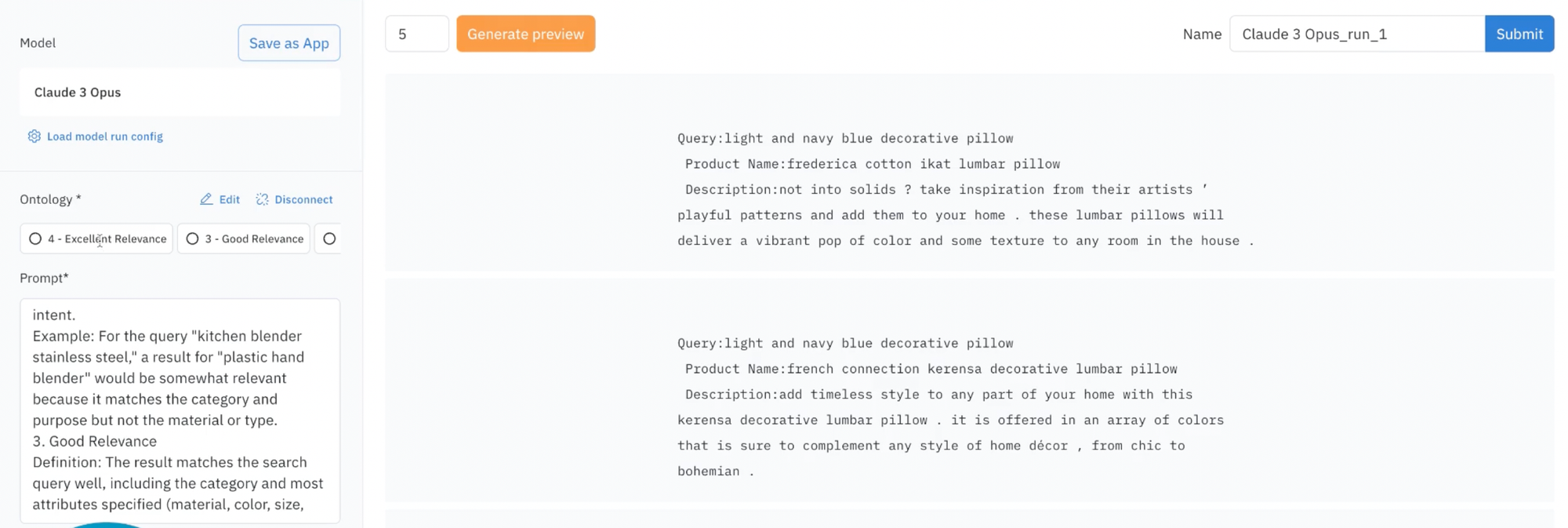

After exploring our data, we have a better understanding of what topics exist in our dataset and can proceed to using Labelbox's Foundry product to create an initial model run to accelerate search relevance assessments.

- First, you'll need to set up your ontology for search relevance assessment based on your project's requirements.

- Afterwards, you can define the criteria for rating the relevance of search results to each type of query.

- Next, you can communicate the business definition of relevance to the model directly in the prompt. You can use Foundry to add context to the prompt, allowing the model to rank results as if it were part of your business. In this example, we'll include in the prompt the definitions of "good relevance", "excellent relevance", etc., to help the model predict what fits under each criterion.

As an illustrative example, you can set up "excellent relevance" as a result that perfectly matches the search query, including all specific attributes (category, material, color, purpose, etc.). This indicates that the result is exactly what the user is searching for. For the query "kitchen blender stainless steel", a result for "stainless steel countertop blender" is highly relevant, matching the user's intended category.
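
One way to keep relevance definitions consistent across prompts is to store them as a rubric and render the prompt from it. The sketch below is illustrative only: the "excellent" definition follows the example above, while the "good" and "poor" definitions, the prompt wording, and the `build_relevance_prompt` helper are assumptions that you would replace with your own business criteria.

```python
# Relevance rubric. Only the "excellent" wording comes from this guide;
# the "good" and "poor" definitions are placeholder assumptions.
RELEVANCE_RUBRIC = {
    "excellent": (
        "The result perfectly matches the query, including all specific "
        "attributes (category, material, color, purpose)."
    ),
    "good": "The result matches the category but misses some attributes.",
    "poor": "The result does not match the category the user is looking for.",
}


def build_relevance_prompt(query, result, rubric=RELEVANCE_RUBRIC):
    """Render a plain-text prompt that embeds the rubric definitions."""
    lines = [
        "You are rating search results for an e-commerce website.",
        "Relevance levels:",
    ]
    for level, definition in rubric.items():
        lines.append(f"- {level}: {definition}")
    lines.append(f"Query: {query}")
    lines.append(f"Result: {result}")
    lines.append("Answer with exactly one level: " + ", ".join(rubric))
    return "\n".join(lines)


prompt = build_relevance_prompt(
    "kitchen blender stainless steel",
    "stainless steel countertop blender",
)
print(prompt)
```

Keeping the rubric in one place makes it easier to adjust a definition and regenerate a preview without rewriting the whole prompt.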

Generate an initial preview to assess how well the adjusted prompt performs. You can then save the adjusted prompt as an app, including the data type (text), ontology, and original prompt. This allows for easy re-use and the ability to build upon the saved app for future assessments of search relevance criteria.

After this has been set up, you can generate another preview to ensure quality before submitting the model run for assessments.

View search relevance assessments results

- Once your model inference job has completed, navigate to the Model tab to view the completed model run with your rankings. A variety of foundation models are available; Claude 3 is used in this tutorial.

- Optionally, you can add an explanation classification or review the results of the ranking, which includes all 560 items/data rows.

- By adding the results to your project, you can then perform further analysis, such as examining the distribution of predictions in the Metrics view.

- Next, select all items, send them to Annotate, and choose "search relevance assessment", where humans can review the results as an additional quality check.
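
If you export the predicted ratings, the distribution that the Metrics view summarizes can also be reproduced offline with a few lines of Python. This is a minimal sketch under assumptions: the label names and the hard-coded `predictions` list are made up for illustration and do not come from the tutorial's 560 data rows.

```python
from collections import Counter


def relevance_distribution(predictions):
    """Map each label to (count, share of total), most frequent first."""
    counts = Counter(predictions)
    total = sum(counts.values())
    return {label: (n, n / total) for label, n in counts.most_common()}


# Hypothetical predicted ratings, one per data row.
predictions = [
    "excellent", "good", "excellent", "poor",
    "good", "excellent", "excellent", "poor",
]

distribution = relevance_distribution(predictions)
for label, (count, share) in distribution.items():
    print(f"{label:<10} {count:>3} {share:.0%}")
```

A distribution that is heavily skewed toward one rating is often a sign that the rubric in the prompt needs sharper boundaries between levels.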

Further analyze and optimize your search relevance assessments results

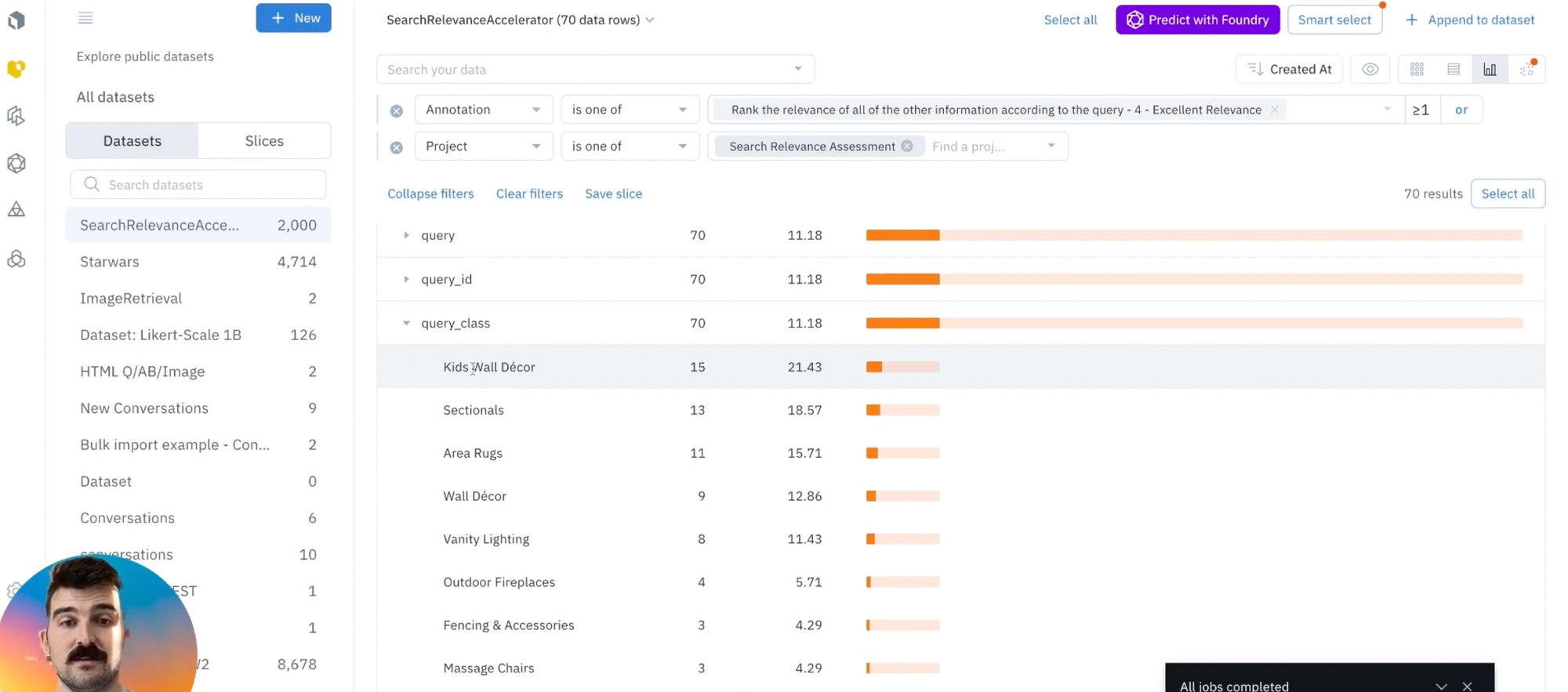

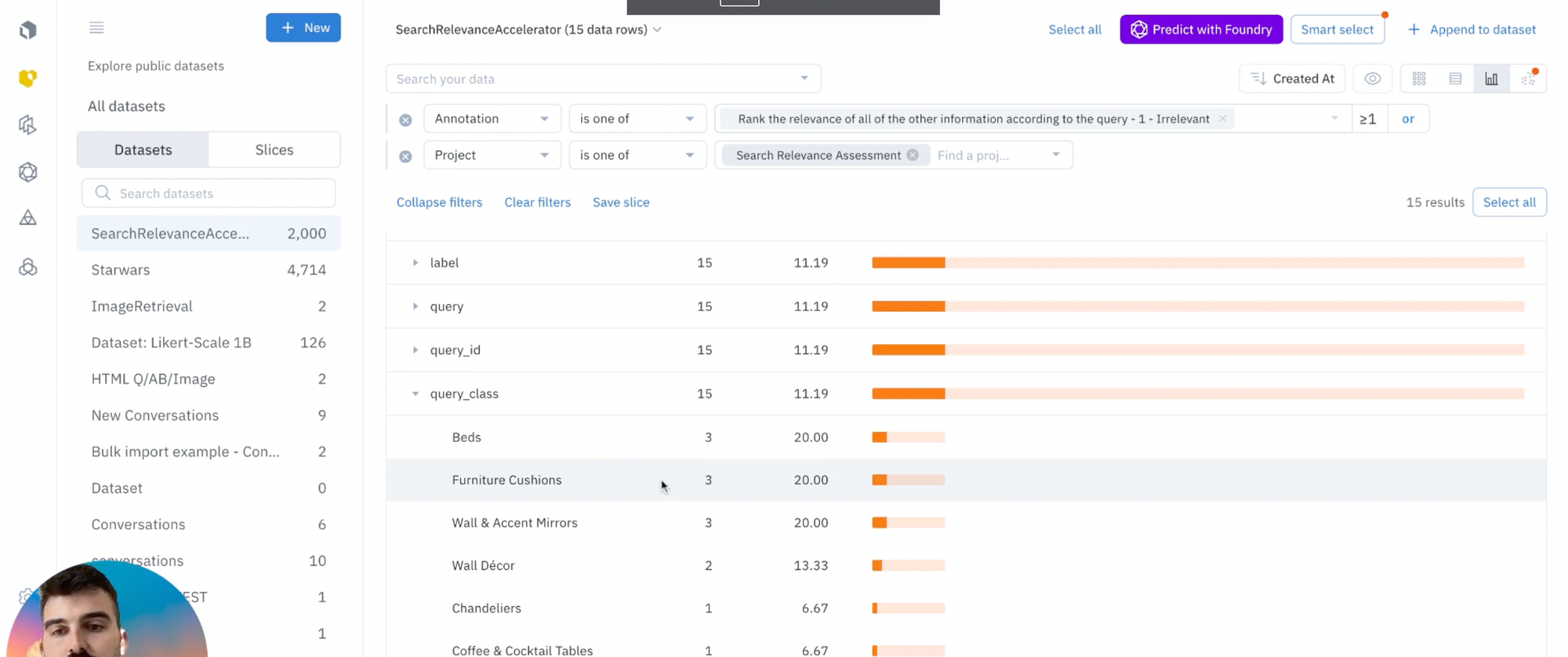

- The last part of the walkthrough is to analyze the distribution of relevance categories within your project, noting the varying levels of relevance, and to review the query class for all 560 data rows to identify trends in relevance. You can do this by using automated approaches to understand query types and relevance patterns, as we show in the video above.

- By filtering your dataset by the search relevance assessment project, you can navigate to the Analytics view to identify trends and examples of excellent relevance and poor relevance within specific query classes (as shown below).

- At this time, you can consider adjusting prompts to accurately reflect relevance criteria, or use metadata fields, such as query class, to further analyze relevance.
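
The breakdown of relevance by query class described above can be sketched as a small cross-tabulation. The field names `query_class` and `relevance`, the class names, and the sample rows are assumptions for illustration; in practice the rows would come from your exported project data.

```python
from collections import Counter, defaultdict


def relevance_by_class(rows):
    """Count relevance ratings separately for each query class."""
    table = defaultdict(Counter)
    for row in rows:
        table[row["query_class"]][row["relevance"]] += 1
    return table


def poor_share(table, label="poor"):
    """Share of rows rated `label` within each query class."""
    return {
        query_class: counts[label] / sum(counts.values())
        for query_class, counts in table.items()
    }


# Hypothetical exported rows.
rows = [
    {"query_class": "Furniture", "relevance": "excellent"},
    {"query_class": "Furniture", "relevance": "poor"},
    {"query_class": "Furniture", "relevance": "poor"},
    {"query_class": "Appliances", "relevance": "excellent"},
    {"query_class": "Appliances", "relevance": "excellent"},
    {"query_class": "Appliances", "relevance": "good"},
]

table = relevance_by_class(rows)
shares = poor_share(table)
print(shares)
```

Query classes with a high share of poor ratings are natural candidates for prompt adjustments or closer human review.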

To further evaluate and enrich the data, teams can also incorporate human supervision into the labeling process, choosing among several approaches: fully automated, hybrid (half human-in-the-loop, half automated), or all human-in-the-loop.
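
As a rough illustration of these options, the sketch below assigns each data row to human review or to the automated path based on a configurable fraction. It is an assumption-laden example rather than a Labelbox feature: the `route` helper, the `query-<n>` keys, and the hash-based split are hypothetical, chosen so that the same row always lands in the same bucket across runs.

```python
import hashlib


def route(global_key, human_fraction):
    """Deterministically route a data row to 'human_review' or 'automated'.

    human_fraction = 0.0 is fully automated, 0.5 is a half-and-half hybrid,
    and 1.0 sends everything to human-in-the-loop review.
    """
    digest = hashlib.sha256(global_key.encode("utf-8")).digest()
    # Map the first 4 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return "human_review" if bucket < human_fraction else "automated"


keys = [f"query-{i}" for i in range(20)]  # hypothetical data row keys

fully_automated = [route(key, 0.0) for key in keys]
hybrid = [route(key, 0.5) for key in keys]
all_human = [route(key, 1.0) for key in keys]
print(hybrid.count("human_review"), "of", len(keys), "rows go to human review")
```

Hashing the key instead of sampling randomly keeps the assignment stable, so a row that was reviewed by a person in one run is not silently moved to the automated path in the next.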

With Labelbox, you can improve your data further in the following ways:

1) Internal team of labelers: your team can start labeling directly in the Labelbox editor, utilizing automation tools and custom workflows to maintain quality through human-in-the-loop review.

2) External team of expert labelers with Alignerr: Leverage our global network of specialized labelers for a variety of tasks. This community of subject matter experts from several disciplines aligns AI models by creating high-quality data in their fields of expertise. The community spans nearly every major discipline across sciences, industries, and languages worldwide.

By tapping into the most recent developments in foundation models, businesses can transform the effectiveness of website searches by refining the alignment between user intent and product offerings. Given the abundance of search queries that a prospective customer may use, the process of sorting through them manually is labor-intensive and time-consuming.

By harnessing the power of AI, organizations can efficiently examine search queries and feedback on a large scale, uncovering recurring themes and gauging customer sentiment.

This enables enterprises to detect prevalent trends and target areas for enhancement, allowing them to optimize the overall website experience to drive key metrics like user retention, conversion rates, and revenue. Remember to optimize the website content as well to ensure it meets your end users' goals. Give the walkthrough a try; we also recommend checking out our other solution accelerators, such as personalized experiences for retail, to improve customer experiences.

Labelbox is a data-centric AI platform that empowers teams to iteratively build websites with powerful search relevance. To get started, sign up for a free Labelbox account or request a demo.
